Technical Implementation

The preceding sections explained the necessity for a universal, secure, and inclusive proof of personhood mechanism. Additionally, they discussed why iris biometrics appears to be the sole feasible path for such a PoP mechanism. The realization via the Orb and World ID has also been explained on a high level. The subsequent section dives deeper into the specifics of the architectural design and implementation of both the Orb and World ID.

Architecture Overview

To get a World ID, an individual begins by downloading the World App. The app stores their World ID and enables them to use it across multiple platforms and services. The World App is user-friendly, particularly geared towards crypto beginners, and offers simple financial features based on decentralized finance: allowing users to on- and off-ramp, subject to the availability of providers, swap tokens through a decentralized exchange, and connect with dApps through WalletConnect. Importantly, the system allows other developers to create their own clients without seeking permission, meaning there can be various apps supporting World ID.

Once verified through the Orb, individuals are issued a World ID, a privacy-preserving proof-of-personhood credential. Through World ID, they can claim a set amount of WLD periodically (Worldcoin Grants), where laws allow. World ID can also be used to authenticate as human with other services (e.g., prevent user manipulation in the case of voting). In the future, other credentials can be issued on the Worldcoin Protocol as well.

To make World ID and the Worldcoin Protocol easy to use, an open source Software Development Kit (SDK) is available to simplify interactions for both Web3 and Web2 applications. The World ID software development kit (SDK) is the set of tools, libraries, APIs, and documentation that accompanies the Protocol. Developers can use the SDK to leverage World ID in their applications. The SDK makes web, mobile, and on-chain integrations fast and simple; it includes tools like a web widget (JS), developer portal, development simulator, examples, and guides.

The Orb

Previous sections discussed why a custom hardware device using iris biometrics is the only approach to ensure inclusivity (i.e. everyone can sign up regardless of their location or background) and fraud resistance, promoting fairness for all participants. This section discusses the engineering details of the Orb, which was first prototyped and developed by Tools for Humanity.

Why Custom Hardware is Needed

It would have been significantly easier to use off-the-shelf devices such as smartphones or existing iris imaging devices. However, neither is suitable for uncontrolled and adversarial environments in the presence of significant incentives. To reliably distinguish people, only iris biometrics are suitable for this globally scalable use case. To enable maximum accuracy, device integrity, spoof prevention as well as privacy, a custom device is necessary. The reasoning is described in the following section.

In terms of the biometric verification itself, the fastest and most scalable path would be to use smartphones. However, there are several key challenges with this approach. First, smartphone cameras are insufficient for iris biometrics due to their low resolution across the iris, which decreases accuracy. Further, imaging in the visible spectrum can result in specular reflections on the lens covering the iris, and the low reflectivity of brown eyes (most of the population) introduces noise. The Orb captures high quality iris images with more than an order of magnitude higher resolution compared to iris recognition standards. This is enabled by a custom, narrow field-of-view camera system. Importantly, images are captured in the near infrared spectrum to reduce environmental influences like different light sources and specular reflections. More details on the Orb’s imaging system can be found in the following sections.

Second, the achievable security bar is very low. For PoP, the important part is not identification (i.e. “Is someone who they claim they are?”), but rather proving that someone has not verified yet (i.e. “Is this person already registered?”). A successful attack on a PoP system does not necessitate the attacker’s impersonation of an existing individual, which is a challenging requirement that would be needed to unlock someone's phone. It merely requires the attacker to look different from everyone who has registered so far. Phones and existing iris cameras are missing multi-angle and multi-spectral cameras as well as active illumination to detect so-called presentation attacks (i.e. spoof attempts) with high confidence. A widely-viewed video demonstrating an effective method for spoofing Samsung’s iris recognition illustrates how straightforward such an attack could be in the absence of capable hardware.

Further, a trusted execution environment would need to be established in order to ensure that verifications originated from legitimate devices (not emulators). While some smartphones contain dedicated hardware for performing such actions (e.g., the Secure Enclave on the iPhone, or the Titan M chip on the Pixel), most smartphones worldwide do not have the hardware necessary to verify the integrity of the execution environment. Without those security features, basically no security can be provided and spoofing the image capture as well as the enrollment request is straightforward for a capable attacker. This would allow anyone to generate an arbitrary number of synthetic verifications.

Similarly, no off-the-shelf hardware for iris recognition met the requirements that were necessary for a global proof of personhood. The main challenge is that the device needs to operate in untrusted environments which poses very different requirements than e.g. access control or border control where the device is operated in trusted environments by trusted personnel. This significantly increases the requirements for both spoof prevention as well as hardware and software security. Most devices lack multi-angle and multispectral imaging sensors for high confidence spoof detection. Further, to enable high security spoof detection, a significant amount of local compute on the device is needed, without the ability to intercept data transmission, which is not the case for most iris scanners. A custom device enables full control over the design. This includes tamper detection that can deactivate the device upon intrusion, firmware that is designed for security to make unauthorized access very difficult, as well as the possibility to update the firmware down to the bootloader via over the air updates. All iris codes generated by an Orb are signed by a secure element to make sure they originate from a legitimately provisioned Orb, instead of, for example, an attacker’s laptop. Further, the computing unit of the Orb is capable of running multiple real-time neural networks on the five camera streams (mentioned in the last section). This processing is used for real time image capture optimization as well as spoof detection. Additionally, this enables maximum privacy by processing all images on the device such that no iris images need to be stored by the verifier.

While no hardware system interacting with the physical world can achieve perfect security, the Orb is designed to set a high bar, particularly in defending against scalable attacks. The anti-fraud measures integrated into the Orb are constantly refined. Several teams at Tools for Humanity are continuously working on increasing the accuracy and sophistication of the liveness algorithms. An internal red team is probing various attack vectors. In the near future, the red teaming will extend to external collaborators including through a bug bounty program.

Lastly, the correlation between image quality and biometric accuracy is well established, and it is expected that deep learning will benefit even more from increased image quality. Given the goal of reducing error rates as much as possible to achieve maximum inclusivity, the image quality of most devices was insufficient.

Since commercially available iris imaging devices did not meet the image quality or security needs, Tools for Humanity dedicated several years to developing a custom biometric verification device (the Orb) to enable universal access to the global economy in the most inclusive manner possible.

Hardware

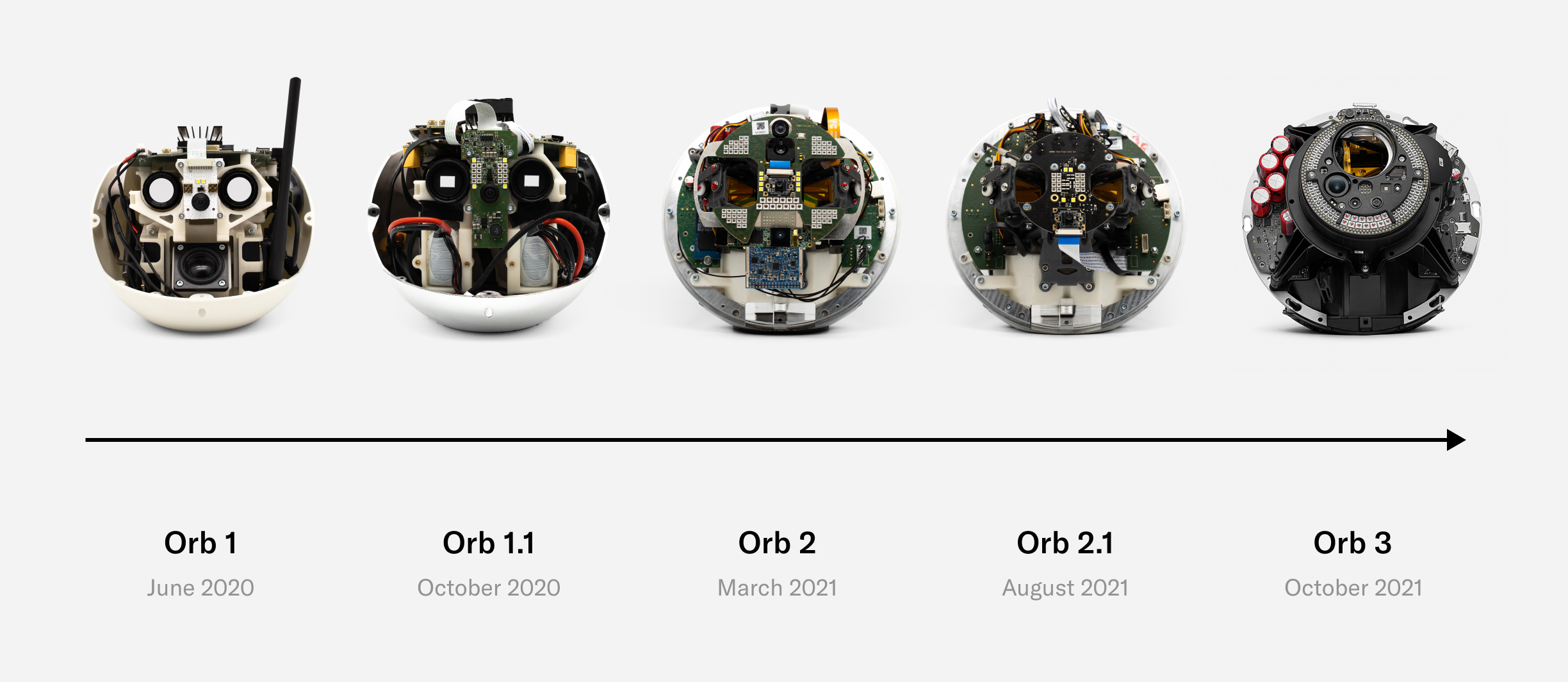

Three years of R&D, including one year of small-scale field testing and one year of transition to manufacturing at scale, have led to the current version of the Orb, which is being open sourced. Feedback for design improvements is welcome and highly encouraged. The remainder of this section will go through a teardown of the Orb, with a few engineering anecdotes included.

Today’s Orb represents a precise balance of development speed, compactness, user experience, cost and at-scale production with minimal compromise being made on imaging quality and security. There will likely be future versions that are optimized even further both by Tools for Humanity and other companies as the Worldcoin ecosystem decentralizes. However, the current version represents a key milestone that enables scaling the Worldcoin project.

The following takes the reader through some of the most important engineering details of the Orb, as well as how the imaging system works. For security purposes, only tamper detection mechanisms that are meant to catch intrusion attempts are left out.

Design

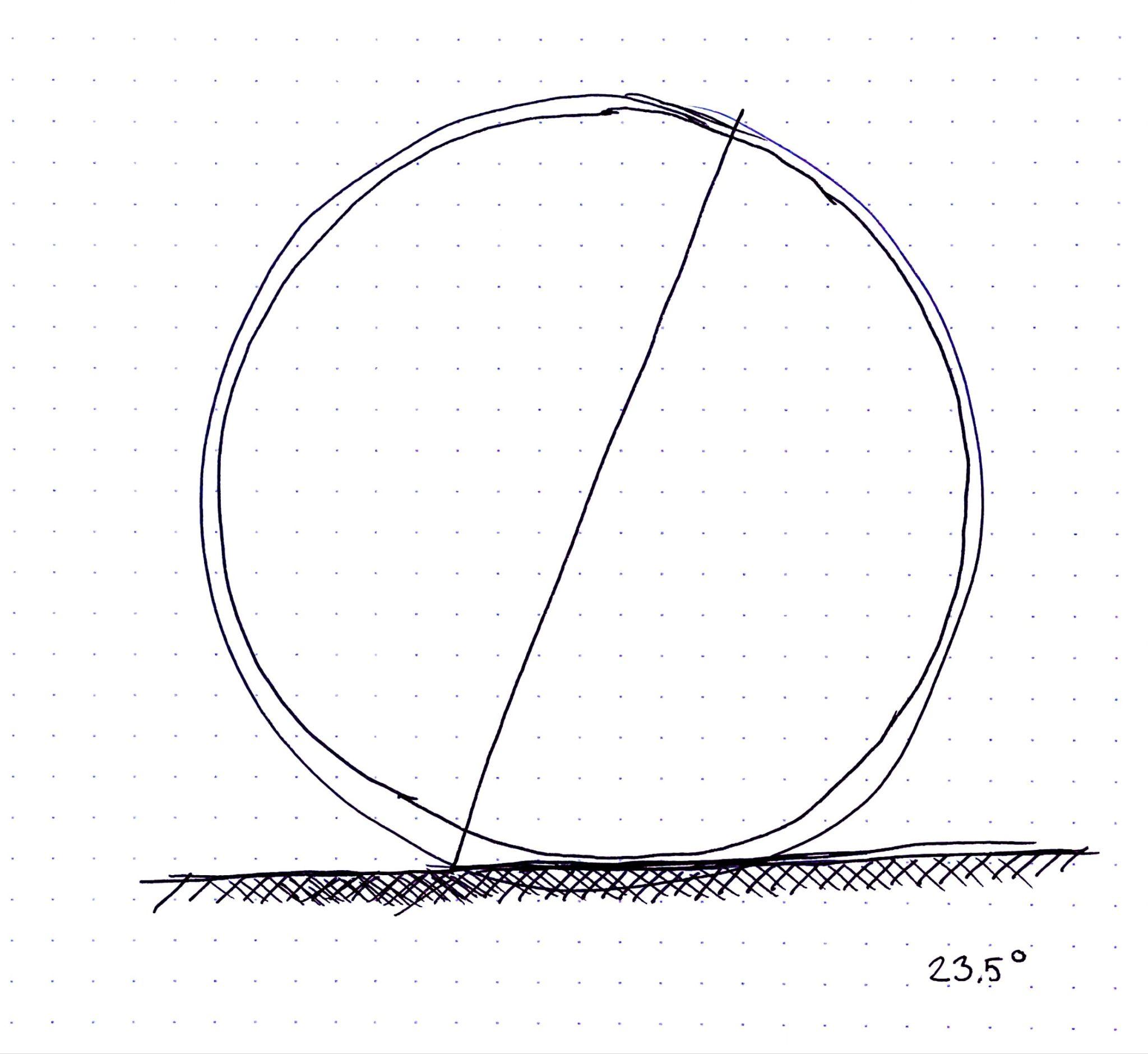

Fundamental to the development of the Orb was its design. A spherical shape is an engineering challenge. However, it was important for the design of the Orb to reflect the values of the Worldcoin project. The spherical shape stands for Earth, which is home to all. Similarly, the Orb is tilted at 23.5 degrees, the same angle at which the Earth is tilted relative to its orbital plane around the sun. There’s even a 2mm thick clear shell on the outside of the Orb which protects the Orb just like the atmosphere protects Earth. The resemblance to Earth symbolizes that the Worldcoin project is meant to give everyone the opportunity to participate, regardless of their background. The Orb and its use of biometrics reflect that, since nothing is required other than being human.

Mechanics

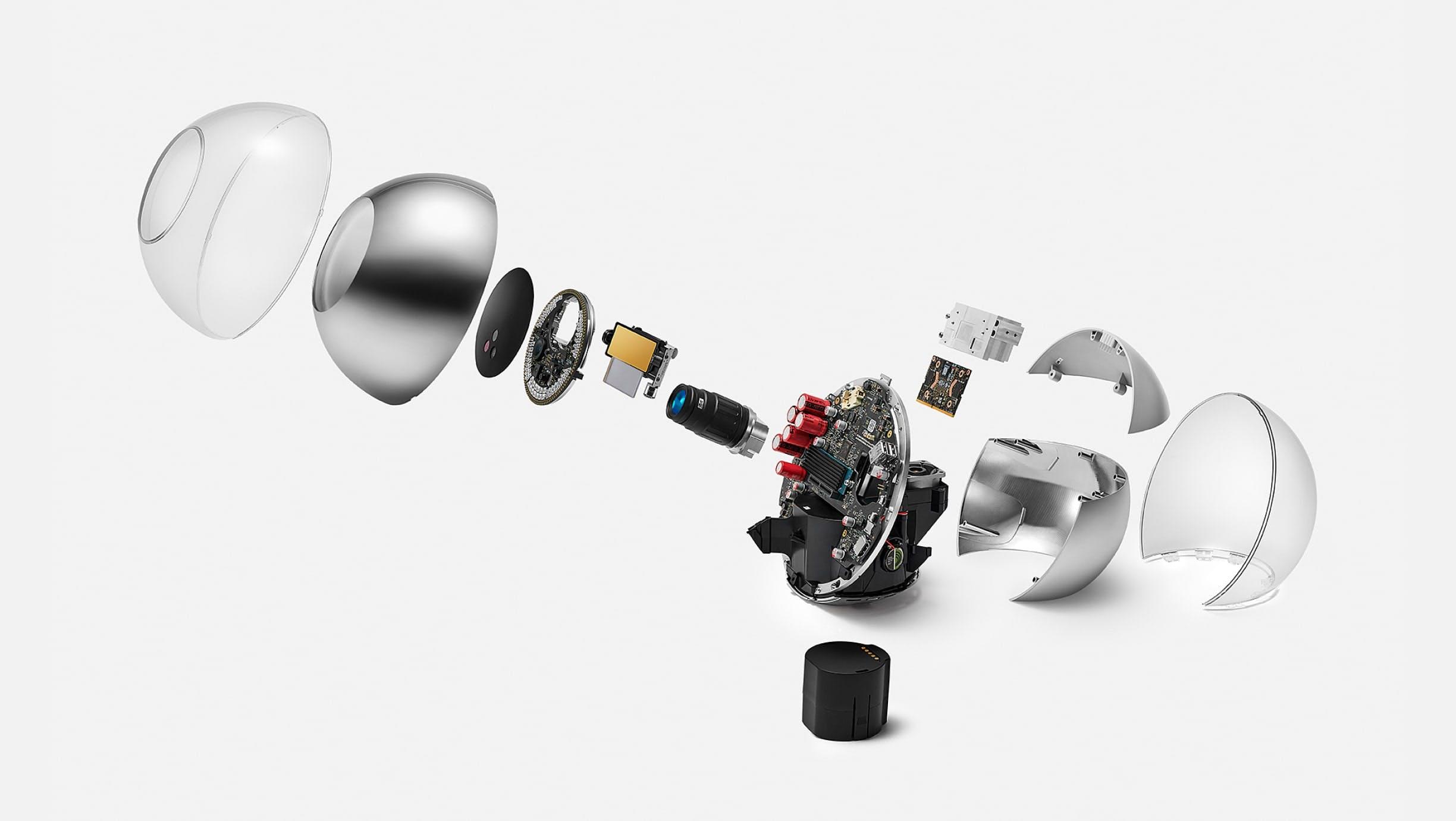

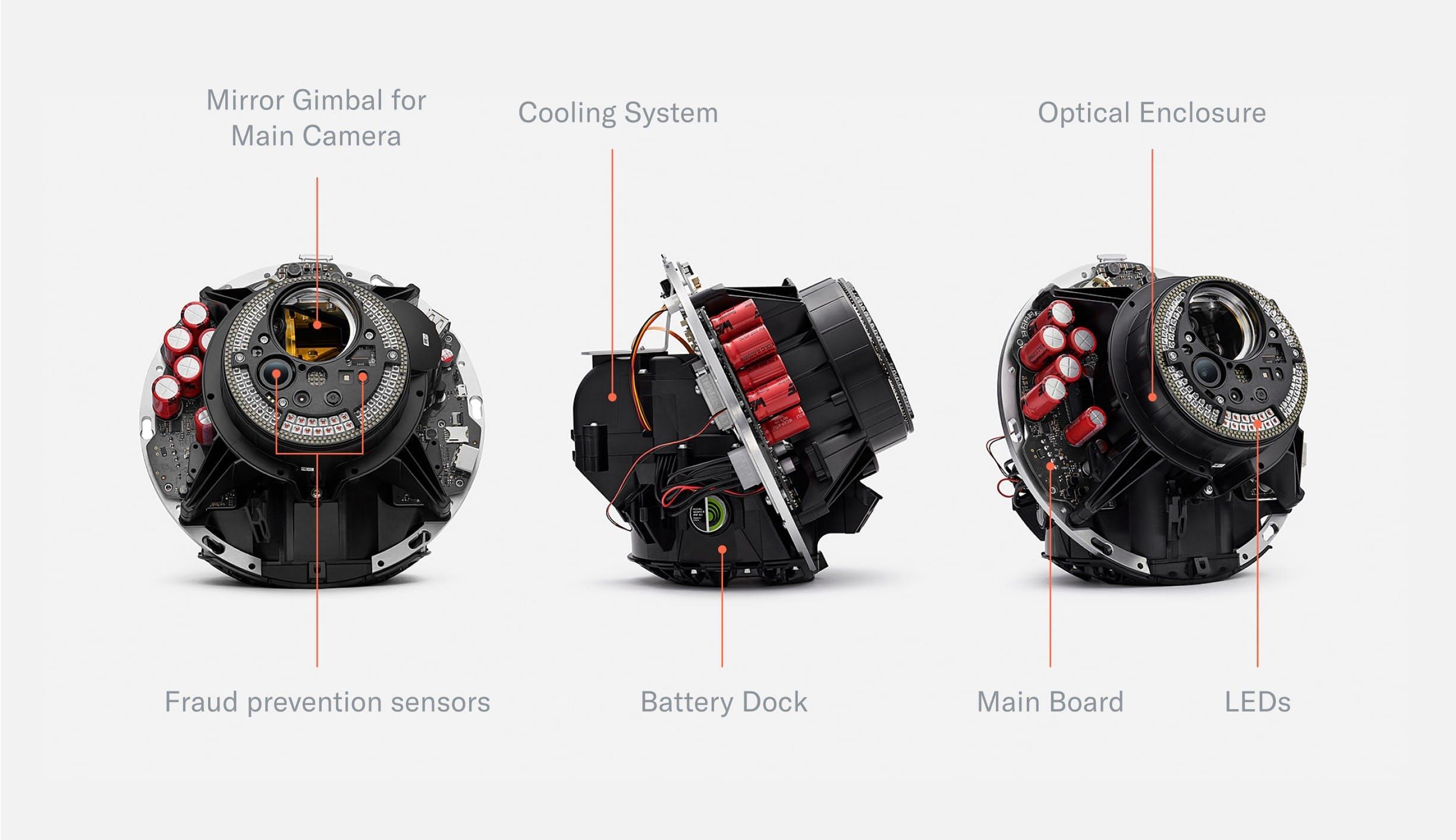

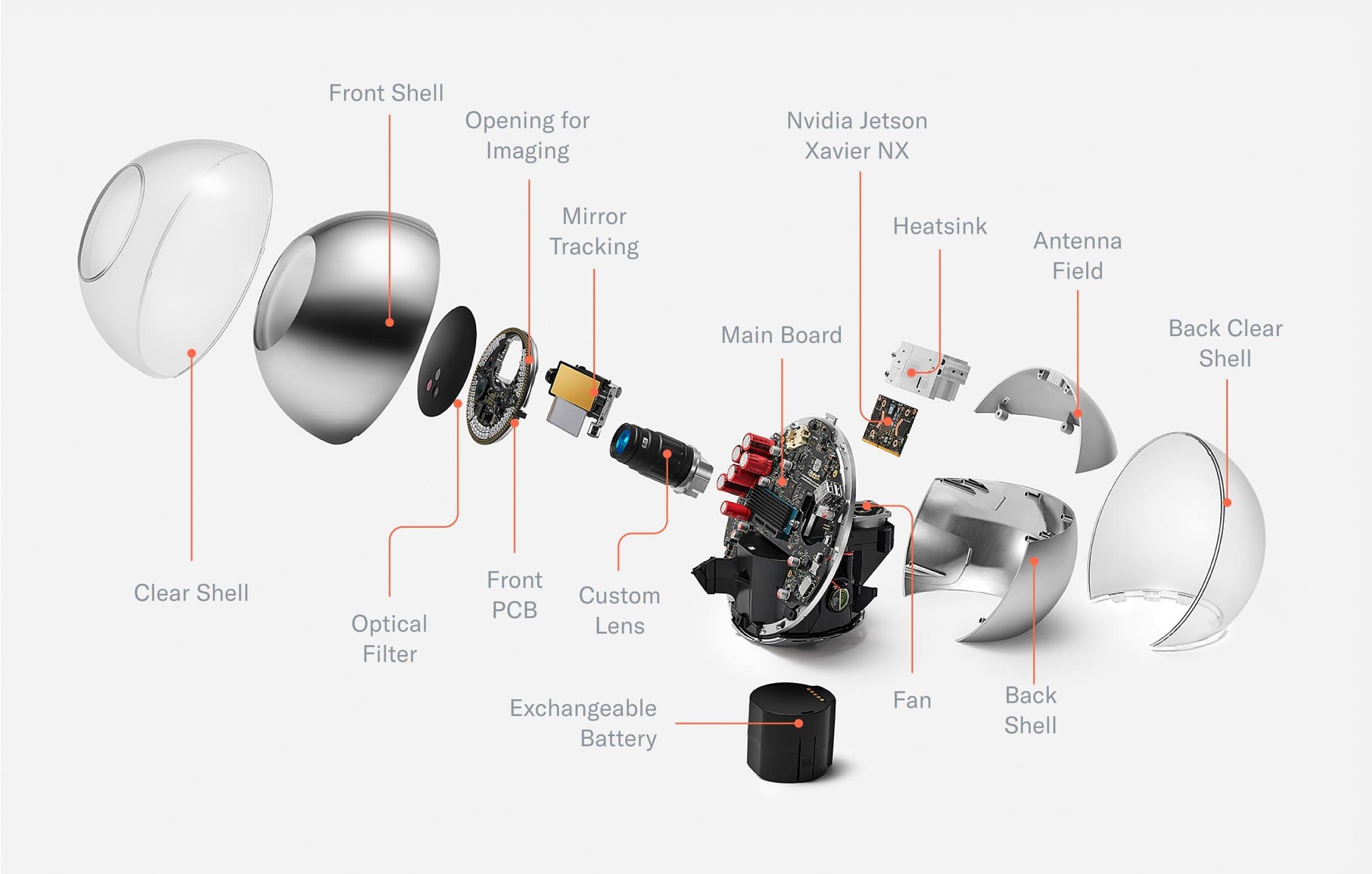

When removing the shell, the mainboard, optical system and cooling system become visible. Most of the optical system is hidden in an enclosure that, together with the shell, forms a dust- and water-resistant environment to enable long-term use even in challenging environments.

The Orb consists of two hemispheres separated by the mainboard, which is tilted at 23.5°, the angle of the Earth's rotational axis. The mainboard holds a powerful computing unit to enable local processing for maximum privacy. The frontal half of the Orb is dedicated to the sealed optical system. The optical system consists of several multispectral sensors to verify liveness and a 2D gimbal-enabled narrow field of view camera to capture high resolution iris images. The other hemisphere is dedicated to the cooling system as well as speakers. An exchangeable battery can be inserted from the bottom to enable uninterrupted operation in a mobile setting.

Once the shell is removed, the Orb can be divided into four core parts:

- Front: The optical system

- Middle: The mainboard separates the device into two hemispheres

- Back: The main computing unit as well as the active cooling system

- Bottom: An exchangeable battery

With the housing material removed (e.g. the dust-proof enclosure of the optical system), all relevant components of the Orb become visible. This includes the custom lens, which is optimized for both near infrared imaging and fast, durable autofocus. The front of the optical system is sealed by an optical filter to keep dust out and minimize noise from the visible spectrum to optimize image quality. In the back, a plastic component in the otherwise chrome shell allows for optimized antenna placement. The chrome shell is covered by a clear shell to avoid deterioration of the coating over time.

First prototypes were tested outside the lab as early as possible. Naturally, this taught the team many lessons, including:

Optical System

With the first prototype, the signup experience was notoriously difficult. Over the course of a year the optical system was upgraded with autofocus and eye tracking such that alignment becomes trivial when the person is within an arm's length of the Orb.

Battery

No off-the-shelf battery would last for a full day on a single charge. A custom exchangeable battery was designed based on 18650 Li-Ion cells, the same form factor as the cells used in modern electric cars. The battery consists of 8 cells with 3.7V nominal voltage in a 4S2P configuration (14.8V) with a capacity of close to 100Wh, which is a limit imposed by regulations related to logistics. Because a depleted battery can be swapped for a charged one, Orb uptime is no longer limited by a single charge.

The Orb’s custom battery is made of Li-Ion 18650 cells (the same cells used in many electric cars). With close to 100Wh, the capacity is optimized for battery lifetime while complying with transportation regulations. A USB-C connector makes recharging convenient.
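The pack figures quoted above can be checked with a few lines of arithmetic. This is only a sketch: the 100Wh value is treated as an upper bound taken from the text, and the per-cell capacity it implies is an inference, not a published specification.

```python
# Figures taken from the text: 8 cells, 3.7 V nominal, 4S2P, close to 100 Wh.
CELL_NOMINAL_V = 3.7
SERIES = 4            # cells in series add their voltages
PARALLEL = 2          # parallel strings add their capacities
PACK_ENERGY_WH = 100.0  # upper bound; the text says "close to 100Wh"


def pack_voltage(cell_v=CELL_NOMINAL_V, series=SERIES):
    """Nominal pack voltage of a series string."""
    return cell_v * series


def implied_cell_capacity_ah(energy_wh=PACK_ENERGY_WH, cell_v=CELL_NOMINAL_V,
                             series=SERIES, parallel=PARALLEL):
    """Per-cell capacity (Ah) implied by the pack energy (an inference)."""
    pack_ah = energy_wh / pack_voltage(cell_v, series)
    return pack_ah / parallel


if __name__ == "__main__":
    print(f"pack voltage: {pack_voltage():.1f} V")
    print(f"implied cell capacity: {implied_cell_capacity_ah():.2f} Ah")
```

The implied per-cell capacity lands in the range typical of high-capacity 18650 cells, which is consistent with the 8-cell, 4S2P description.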

Shell

The coating of the shell sometimes deteriorated in the handheld use case. Therefore, a 2mm clear shell was added to both optimize the design as well as protect the chrome coating from scratches and other wear.

UX LEDs

To make the user experience more intuitive, especially in loud environments where a person might not be able to hear sound feedback, an LED ring was added to help guide people through the sign-up process. Similarly, status LEDs were exposed next to the only button on the Orb to indicate its current state.

Optical System

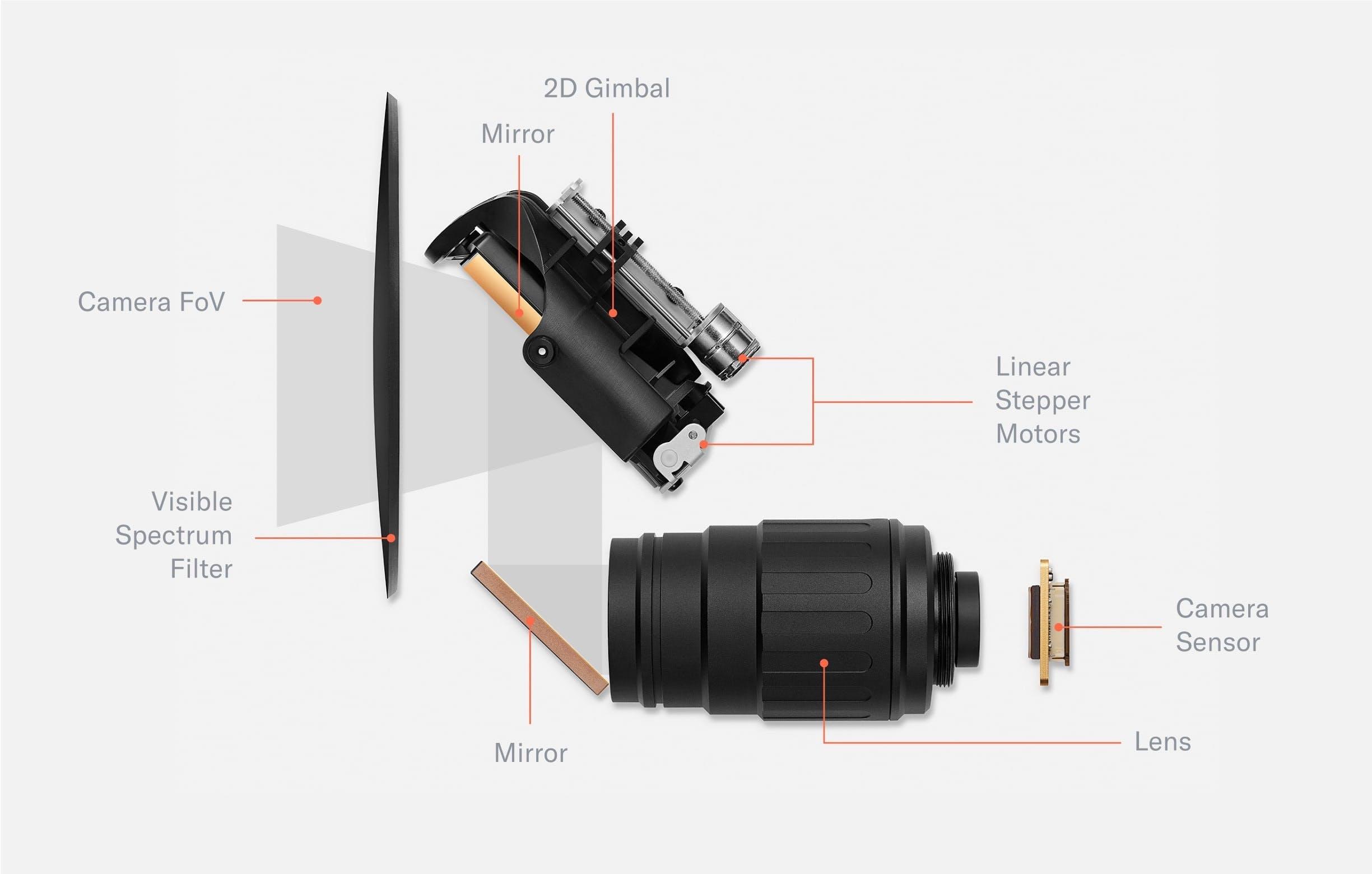

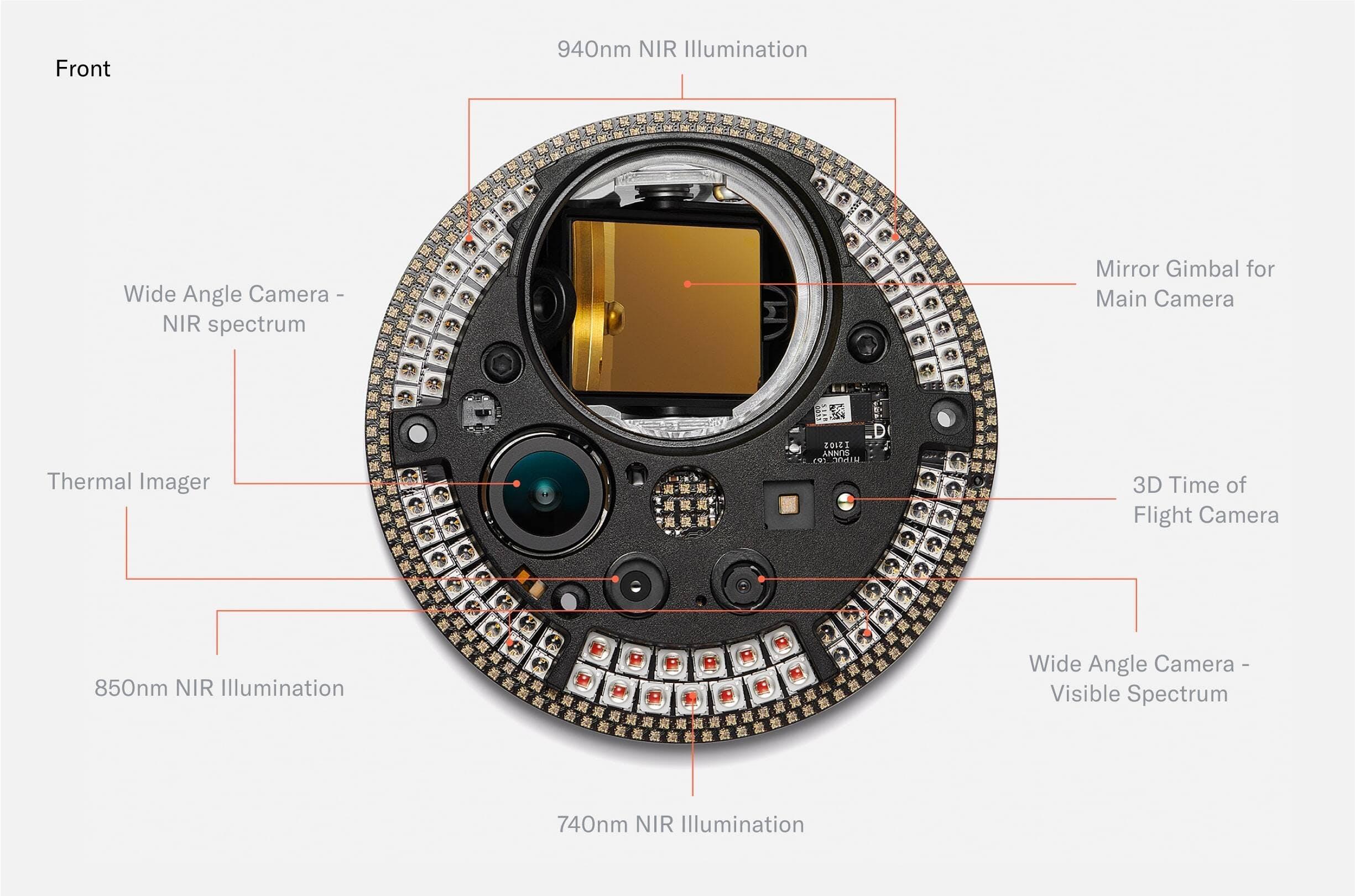

Early field tests showed that the verification experience needed to be even simpler than anticipated. To do this, the team first experimented with many approaches featuring mirrors that allowed people to use their reflection to align with the Orb's imaging system. However, designs that worked well in the lab quickly broke down in the real world. The team ended up building a two-camera system featuring a wide angle camera and a telephoto camera with an adjustable ~5° field of view by means of a 2D gimbal. This increased the spatial volume in which a signup can be successfully completed by several orders of magnitude, from a tiny box of 20x10x5mm for each eye to a large cone.

The main imaging system of the Orb consists of a telephoto lens and 2D gimbal mirror system, a global shutter camera sensor and an optical filter. The movable mirror increases the field of view of the camera system by more than two orders of magnitude. The optical unit is sealed by a black, visible spectrum filter which seals the high precision optics from dust and only transmits near infrared light. The image capture process is controlled by several neural networks.

The wide angle camera captures the scene, and a neural network predicts the location of both eyes. Through geometrical inference, the field of view of the telephoto camera is steered to the location of an eye to capture a high resolution image of the iris, which is further processed by the Orb into an iris code.
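The geometrical inference step can be illustrated with a simple pinhole-camera model. The sketch below is hypothetical and is not the Orb's actual steering code: the function name, the image size and the field of view are assumptions, and it ignores lens distortion, the offset between the two cameras and the mirror kinematics of the gimbal.

```python
import math


def pixel_to_angles(u, v, width, height, hfov_deg):
    """Return (pan, tilt) in degrees of the viewing ray through pixel (u, v).

    Assumes an ideal pinhole camera with square pixels whose optical axis
    passes through the image centre. Positive pan is to the right, positive
    tilt is up (image rows grow downwards).
    """
    # Focal length in pixels, derived from the horizontal field of view.
    focal_px = (width / 2) / math.tan(math.radians(hfov_deg) / 2)
    dx = u - width / 2
    dy = v - height / 2
    pan = math.degrees(math.atan2(dx, focal_px))
    tilt = math.degrees(math.atan2(-dy, math.hypot(dx, focal_px)))
    return pan, tilt


if __name__ == "__main__":
    # Hypothetical eye location predicted by the wide angle camera's network.
    print(pixel_to_angles(620, 310, width=1000, height=800, hfov_deg=90.0))
```

In a real system the resulting angles would still have to be mapped to mirror positions and corrected for the distance to the person, which is why this is only a first-order picture of the idea.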

Beyond simplicity, the image quality was the main focus. The correlation between image quality and biometric accuracy is well established.

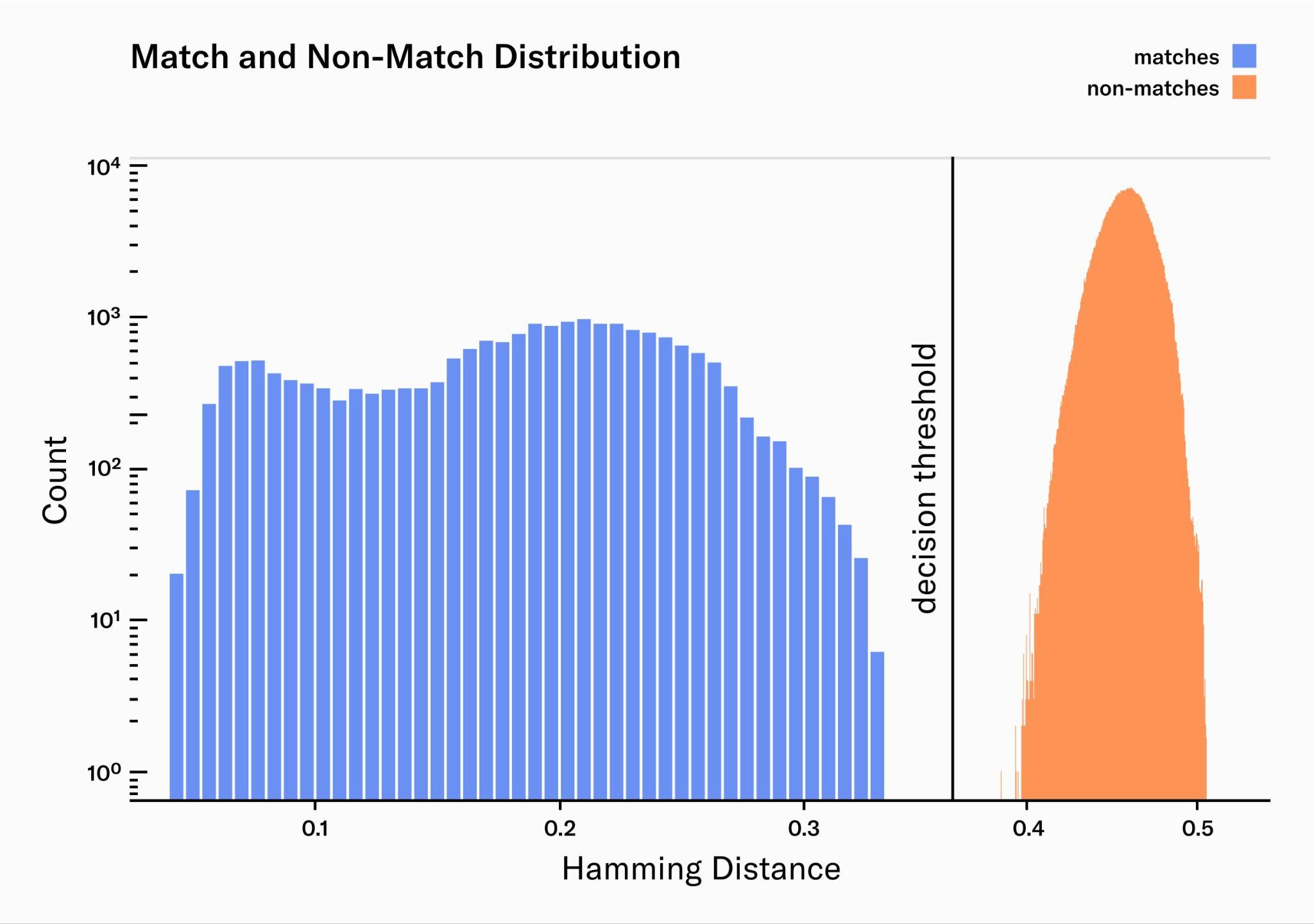

Here pairwise comparisons are plotted: the match distribution for pairs of the same identity (blue) and non-match distribution for pairs of different identity (red). In a perfect system, the match-distribution would be a very narrow peak at zero. However, multiple sources of error widen the distribution, leading to more overlap with the non-match distribution and therefore increasing False Match and False Non-Match rates. High quality image acquisition narrows the match-distribution significantly and therefore minimizes errors. The width of the non-match distribution is determined by the amount of information that is captured by the biometric algorithm: the more information is encoded in the embeddings the narrower the distribution.

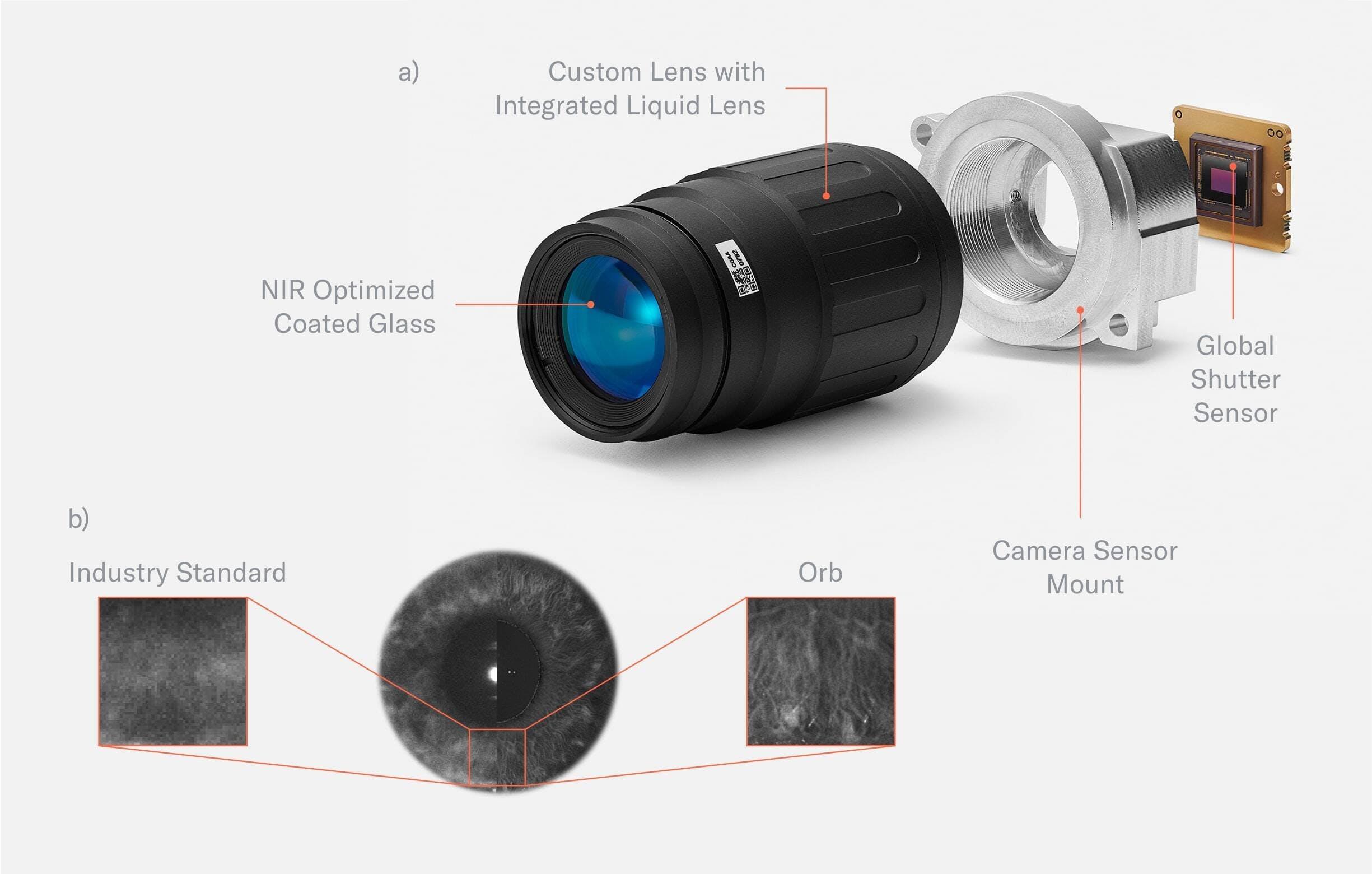

Many off-the-shelf products were tested, but no lens was compact enough to meet the imaging requirements while still being affordable. Therefore, the team partnered with a well known specialist in the machine vision industry to build a customized lens. The lens is optimized for the near infrared spectrum and has an integrated custom liquid lens which allows for neural network controlled millisecond-autofocus. It is paired with a global shutter sensor to capture high resolution, distortion free images.

Fig. 3.10:

a) Custom telephoto lens. The telephoto lens was custom designed for the Orb. The glass is coated to optimize image capture in the near infrared spectrum. An integrated liquid lens allows for durable millisecond autofocus. The position of the liquid lens is controlled by a neural network to optimize focus. To capture images free of motion blur, the global shutter sensor is synchronized with pulsed illumination.

b) A comparison of the image quality of the Worldcoin Orb vs. the industry standard clearly shows the advancements made in the space. The camera and the corresponding pulsed infrared illumination are synchronized to minimize motion blur and suppress the influence of sunlight. This way, the Orb creates lab environment conditions for imaging, no matter its location. Needless to say, the infrared illumination is compliant with eye safety standards (such as EN 62471:2008).

Image quality was the one thing never compromised no matter how difficult it was. In terms of resolution the Orb is orders of magnitude above the industry standard. This provides the basis for the lowest error rates possible to, in turn, maximize the inclusivity of the system.

Electronics

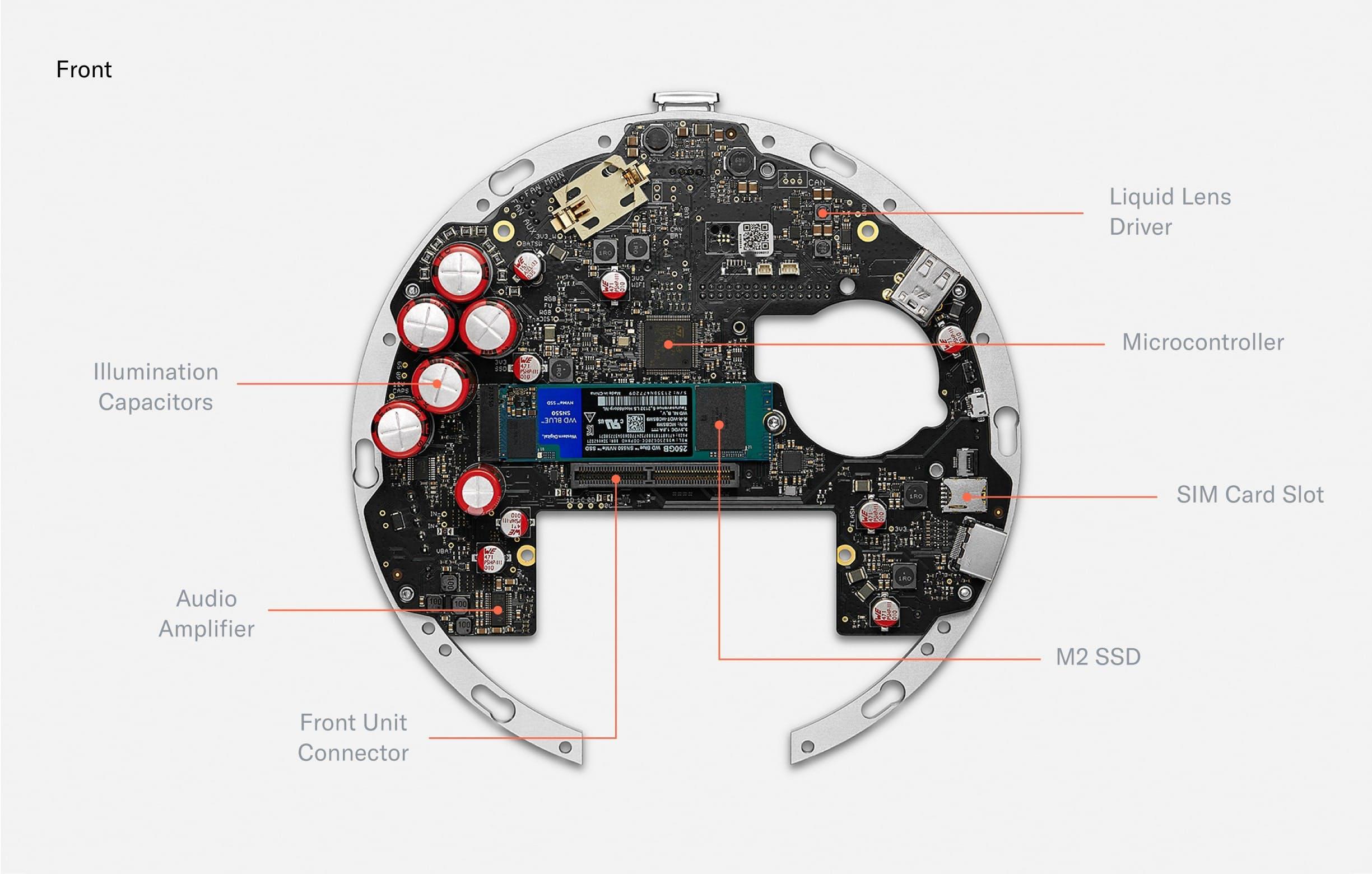

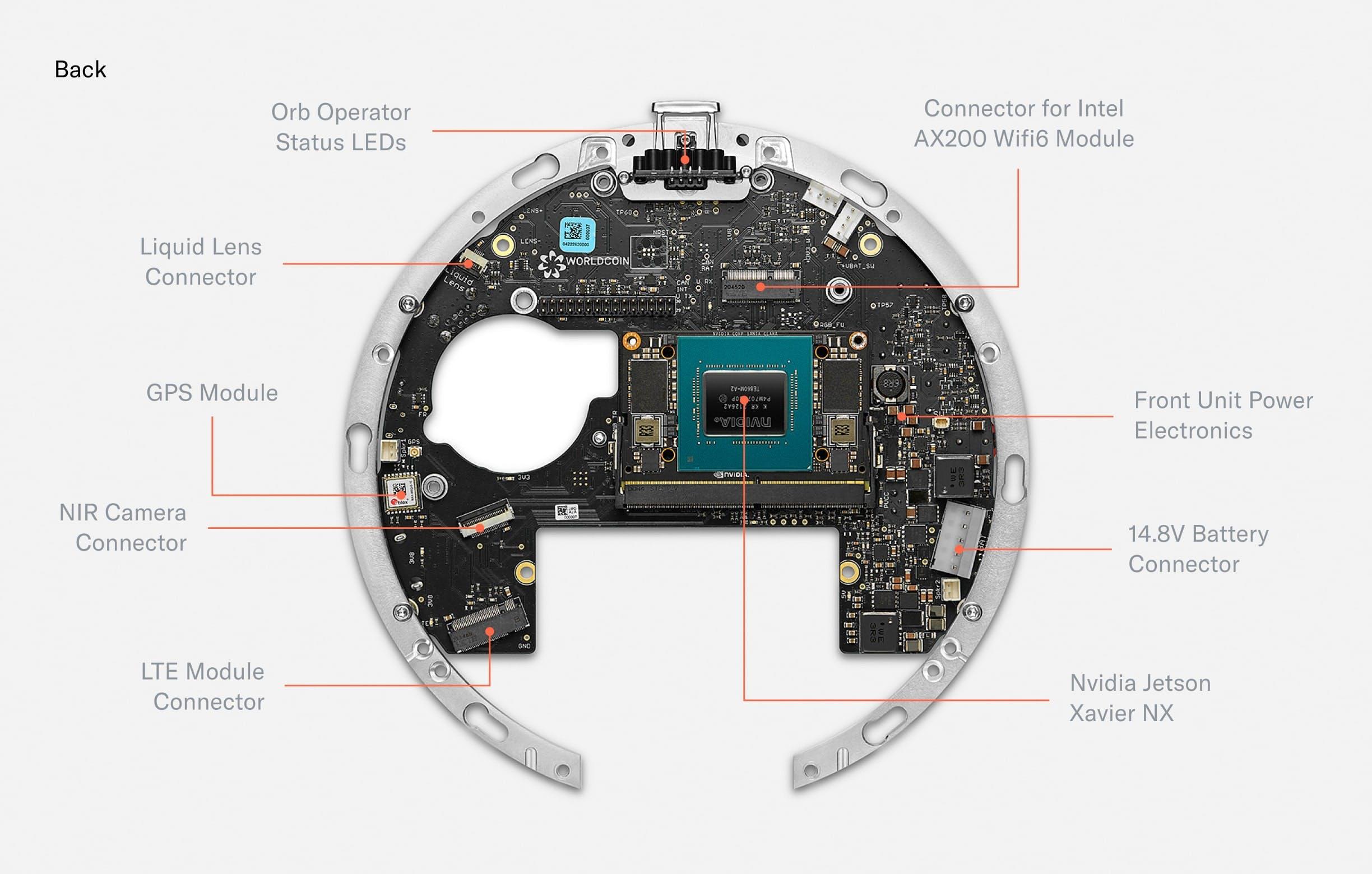

When disassembling the Orb further, several PCBs (Printed Circuit Boards) are visible, including the front PCB containing all illumination, the security PCB for intrusion detection and the bridge PCB which connects the front PCB with the largest PCB: the mainboard.

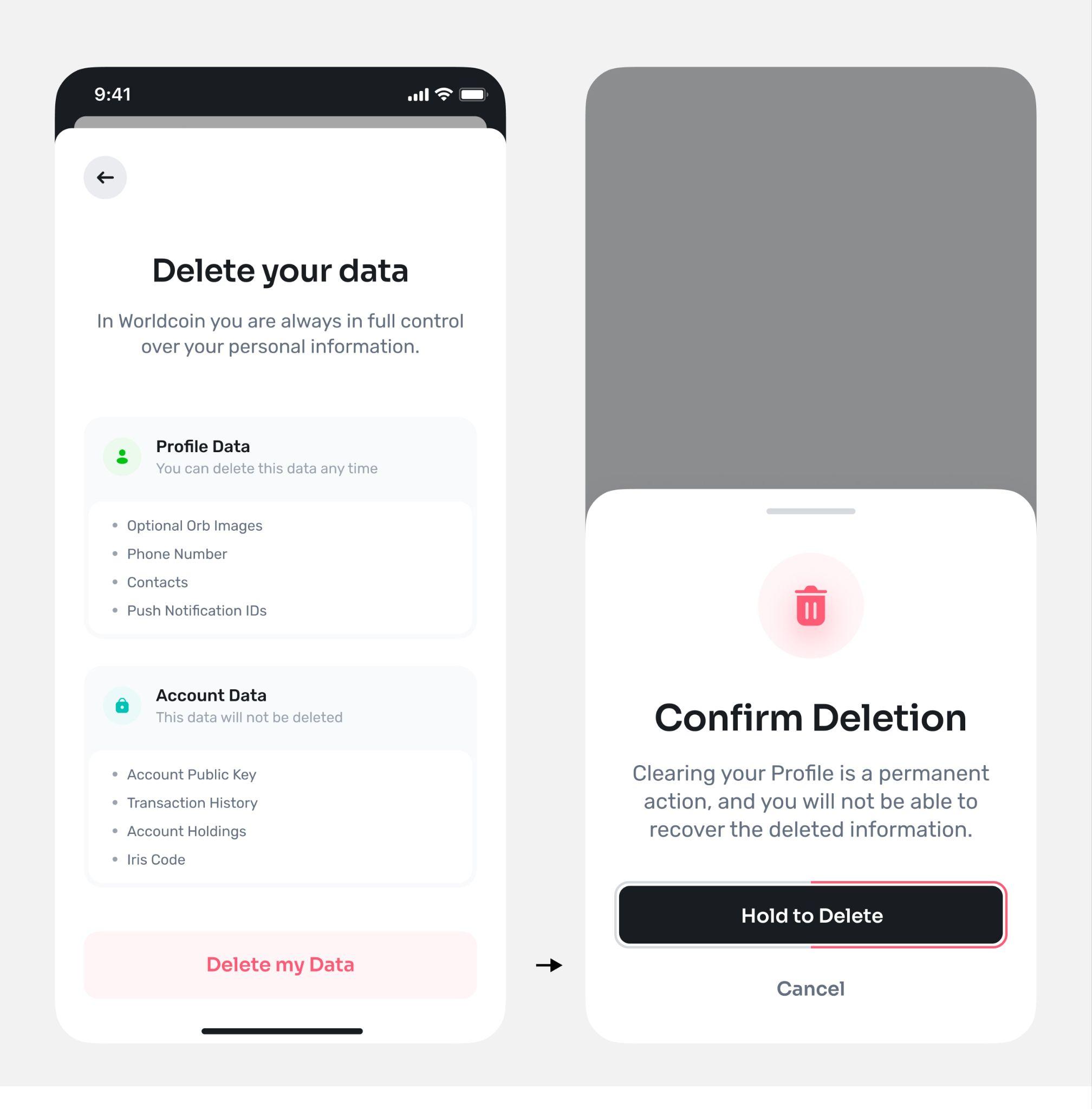

The front of the mainboard holds capacitors to power the pulsed, near infrared illumination (certified eye safe). There are also drivers to power the deformation of the liquid lens in the optical system. A microcontroller controls precise timing of the peripherals. An encrypted M.2 SSD can be used to store images for voluntary data custody and image data collection. Those images are secured by a second layer of asymmetric encryption such that the Orb can only encrypt, but cannot decrypt. The contribution of data is optional and data deletion can be requested at any point in time through the World App. A SIM card slot enables optional LTE connectivity.

Fig. 3.

The back of the mainboard holds several connectors for active elements of the optical system. Additionally, a GPS module enables precise location of Orbs for fraud prevention purposes. A Wi-Fi module allows the Orb to upload iris codes to make sure every person can only sign up once. Finally, the mainboard hosts an Nvidia Jetson Xavier NX which runs multiple neural networks in real time to optimize image capture, perform local anti-spoof detection and calculate the iris code locally to maximize privacy.

The mainboard acts as a custom carrier board for the Nvidia Jetson Xavier NX SoM, which is the main computing unit powering the Orb. The Jetson is capable of running multiple neural networks on several camera streams in real time to optimize image capture (autofocus, gimbal positioning, illumination, and quality checks such as “is_eye_open”) and perform spoof detection. To optimize for privacy, images are fully processed on the device, and are only stored by Tools for Humanity if the user gives explicit consent to help improve the system.

Apart from the Jetson, the other major “plugged-in” component is a 250GB M.2 SSD. The encrypted SSD can be used to buffer images for voluntary data contribution. Images are protected by a second layer of asymmetric encryption such that the Orb can only encrypt, but cannot decrypt. The contribution of data is optional and data deletion can be requested at any point in time through the app.

Further, an STM32 microcontroller controls time-critical peripherals, sequences power, and boots the Jetson. The Orb is equipped with Wi-Fi 6 and a GPS module to locate the Orb and prevent misuse. Finally, a 12-bit liquid lens driver allows for controlling the focus plane of the telephoto lens with a precision of 0.4mm.

The most densely packed PCB of the Orb is the front PCB. It mainly consists of LEDs. The outermost RGB LEDs power the “UX LED ring.” Further inside, there are 79 near infrared LEDs of different wavelengths. The Orb uses 740nm, 850nm and 940nm LEDs to capture a multispectral image of the iris to make the uniqueness algorithm more accurate and detect spoofing attempts.

The front PCB also hosts several multispectral imaging sensors. The most basic one is the wide angle camera, which is used for steering the telephoto iris camera. Since every human can only receive one proof of personhood and Worldcoin is giving away a free share of Worldcoin to every person who chooses to verify with the Orb, the incentives for fraud are high. Therefore, further imaging sensors for fraud prevention purposes were added.

When designing the fraud prevention system, the team started from first principle reasoning: which measurable features do humans have? From there, the team experimented with many different sensors and eventually converged to a set that includes a near infrared wide angle camera, a 3D time of flight camera and a thermal camera. Importantly, the system was designed to enable maximum privacy. The computing unit of the Orb is capable of running several AI algorithms in real time which distinguish spoofing attempts from genuine humans based on the input from those sensors locally. No images are stored unless users give explicit consent to help improve the system for everyone.

Biometrics

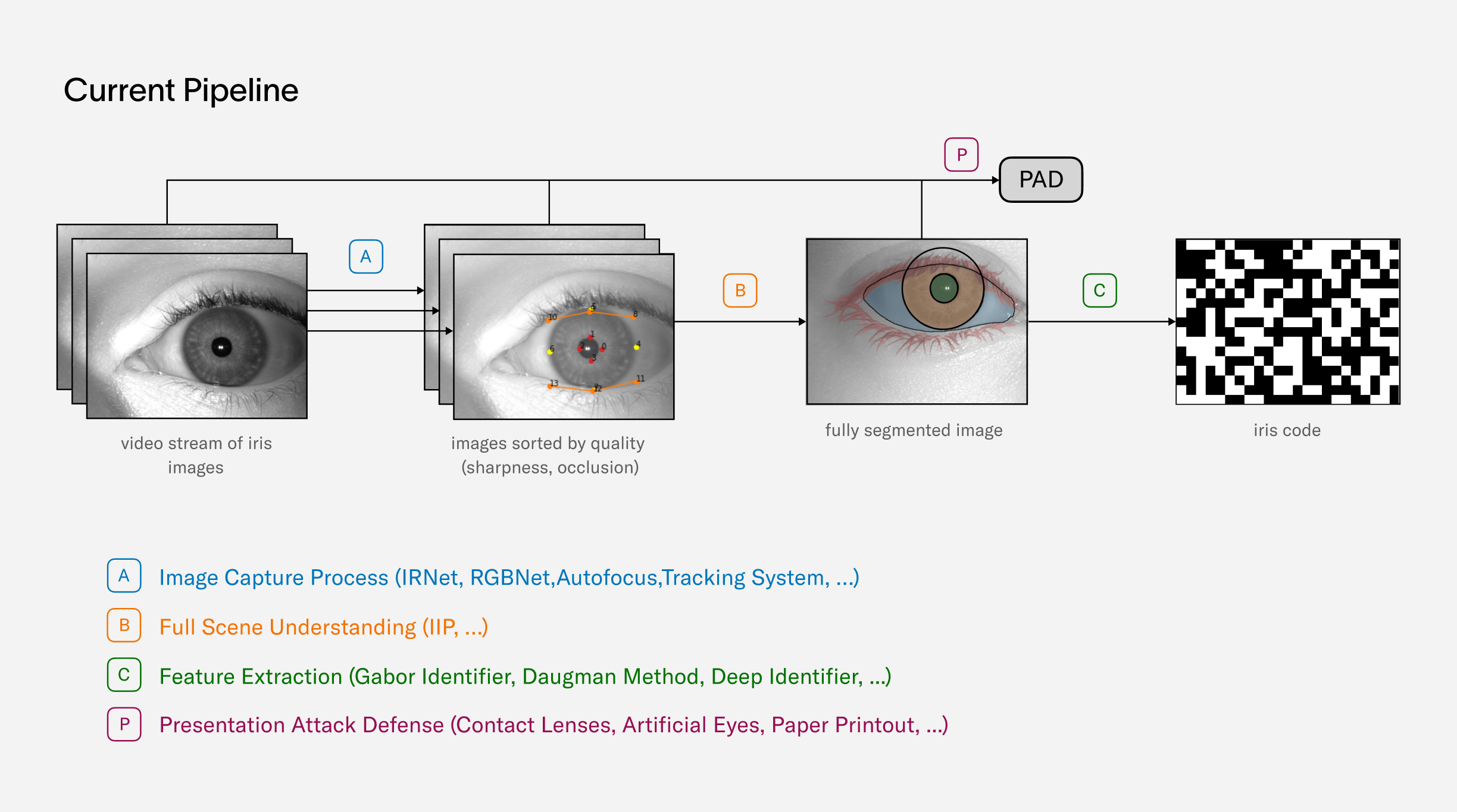

Following the exploration of iris biometrics as a choice of modality, this section provides a detailed look into the process of iris recognition from image capture to the uniqueness check:

- Biometric Performance at a Billion People Scale addresses the scalability of iris recognition technology. It discusses the potential of this biometric modality to establish uniqueness among billions of humans, examines various operating modes and anticipated error rates, and ultimately concludes that using iris recognition at a global scale is feasible.

- Iris Feature Generation with Gabor Wavelets introduces the use of Gabor filtering for generating unique iris features, explaining the scientific principles behind this traditional method which is fundamental to understanding how iris recognition works.

- Iris Inference System explores the practical application of the previously discussed principles. This section describes the uniqueness algorithm and explains how it processes iris images to ensure accurate and scalable verification of uniqueness. This provides a comprehensive overview of the system's operation, demonstrating how theoretical principles translate into practical application.

Collectively, these sections offer a holistic overview of iris recognition, from the core scientific principles to their practical application in the Orb.

Biometric performance at a billion people scale

In order to get a rough estimation on the required performance and accuracy of a biometric algorithm operating on a billion people scale, assume a scenario with a fixed biometric model, i.e. it is never updated such that its performance values stay constant.

Failure Cases

A biometric algorithm can fail in two ways: it can either identify a person as a different person, which is called a false match, or it can fail to re-identify a person although this person is already enrolled, which is called a false non-match. The corresponding rates, the false match rate (FMR) and the false non-match rate (FNMR), are the two critical KPIs for any biometric system.
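To make the two rates concrete, the sketch below estimates them from lists of comparison distances at a chosen decision threshold. The function name, the convention that a pair matches when its distance is at or below the threshold, and the toy numbers are all assumptions made for illustration; they are not measurements from any real system.

```python
def error_rates(genuine_distances, impostor_distances, threshold):
    """Estimate (FMR, FNMR) at a given decision threshold.

    genuine_distances: distances between samples of the same identity.
    impostor_distances: distances between samples of different identities.
    A pair is declared a match when its distance is <= threshold.
    """
    false_matches = sum(d <= threshold for d in impostor_distances)
    false_non_matches = sum(d > threshold for d in genuine_distances)
    fmr = false_matches / len(impostor_distances)
    fnmr = false_non_matches / len(genuine_distances)
    return fmr, fnmr


if __name__ == "__main__":
    # Made-up distances, for illustration only.
    genuine = [0.10, 0.15, 0.22, 0.40]
    impostor = [0.30, 0.45, 0.47, 0.50]
    print(error_rates(genuine, impostor, threshold=0.35))
```

Moving the threshold trades one error for the other: a stricter (lower) threshold reduces false matches but increases false non-matches, which is why the two rates are always reported together.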

For the purposes of this analysis, consider three different systems with varying levels of performance.

- One of the systems, as reported by John Daugman in his paper, demonstrates a false match rate of at a false non-match rate of 0.00014.

- Another system, represented by one of the leading iris recognition algorithms from NEC, has performance values as reported in the IREX IX report and IREX X leaderboard from the National Institute for Standards and Technology (NIST). These values include a false match rate of at a false non-match rate of 0.045.

- The third system, conceived during the early ideation stage of the Worldcoin project, represents a conservative estimate of how well iris recognition could perform outside of the lab environment i.e. in an uncontrolled, outdoor setting. Despite these constraints, it anticipated a false match rate of and a false non-match rate of 0.005. While not ideal, it demonstrated that iris recognition was the most viable path for a global proof of personhood.

A more in-depth examination of how these values are obtained from various sources is also available.

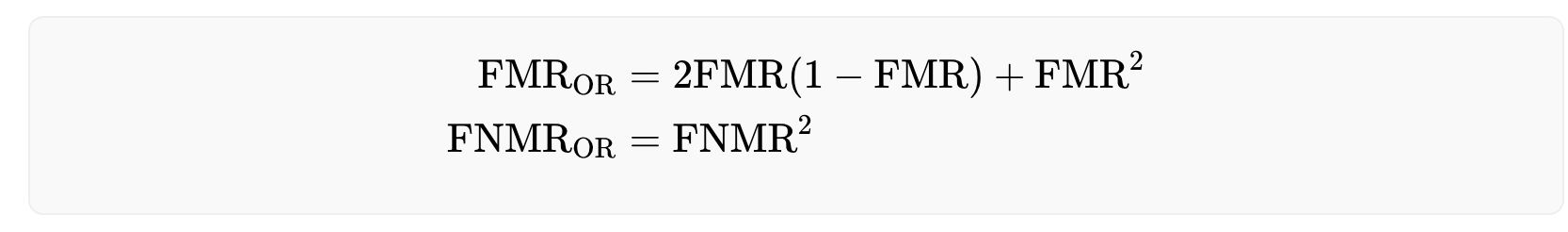

Effective Dual Eye Performance

The values mentioned above pertain to single eye performance, which is determined by evaluating a collection of genuine and imposter iris pairs. However, utilizing both eyes can significantly enhance the performance of a biometric system. There are various methods for combining information from both eyes, and to evaluate their performance, consider two extreme cases:

- The AND-rule, in which a user is deemed to match only if their irises match on both eyes.

- The OR-rule, in which a user is considered a match if their iris on one eye matches that of another user's iris on the same eye.

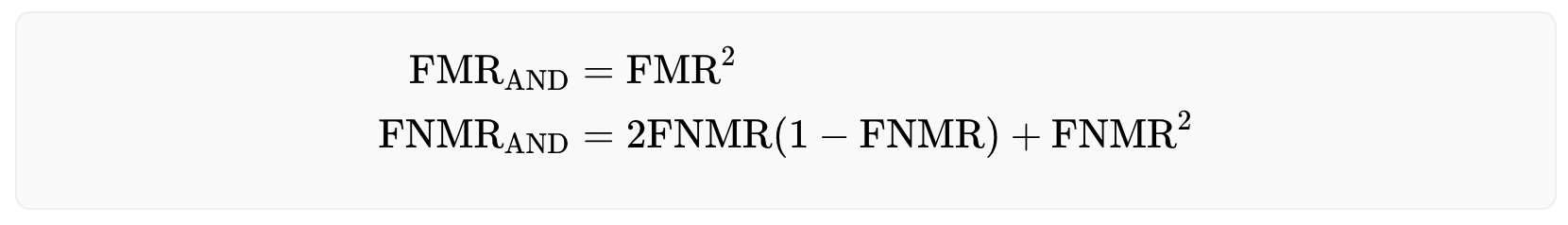

The OR-rule offers a safer approach as it requires only a single iris match to identify a registered user, thus minimizing the risk of falsely accepting the same person twice. Formally, the OR-rule reduces the false non-match rate while increasing the false match rate. However, as the number of registered users increases over time, this strategy may make it increasingly difficult for legitimate users to enroll in the system due to the high false match rate. The effective rates are given below:

On the other hand, the AND-rule allows for a larger user base, but comes at the cost of less security, as the false match rate decreases and the false non-match rate increases. The performance rates for this approach are as follows:
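The effect of both rules on the error rates can be sketched as follows, under the assumption that the two eyes produce statistically independent errors with the same single-eye rates. The function names are illustrative, and the single-eye false match rate in the example is a hypothetical placeholder.

```python
def or_rule(fmr, fnmr):
    """Effective (FMR, FNMR) when a match on either eye counts as a match.

    A false match occurs if at least one eye falsely matches; a false
    non-match requires both eyes to be missed. Assumes independent eyes.
    """
    return 1 - (1 - fmr) ** 2, fnmr ** 2


def and_rule(fmr, fnmr):
    """Effective (FMR, FNMR) when both eyes must match.

    A false match requires both eyes to falsely match; a false non-match
    occurs if at least one eye is missed. Assumes independent eyes.
    """
    return fmr ** 2, 1 - (1 - fnmr) ** 2


if __name__ == "__main__":
    single_eye_fmr = 1e-6    # hypothetical value, for illustration only
    single_eye_fnmr = 0.005  # FNMR quoted for the conservative estimate
    print("OR :", or_rule(single_eye_fmr, single_eye_fnmr))
    print("AND:", and_rule(single_eye_fmr, single_eye_fnmr))
```

For small rates the OR-rule roughly doubles the false match rate and squares the false non-match rate, while the AND-rule does the opposite, which matches the qualitative trade-off described above.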

False Matches

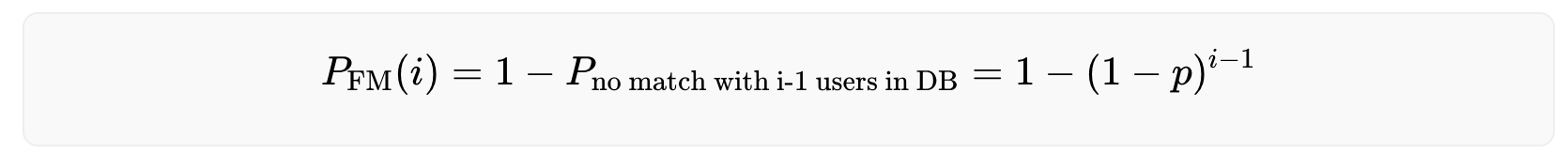

The probability for the i-th (legitimate) user to run into a false match error can be calculated by the equation

with p being the false match rate. Adding up these numbers yields the expected number of false matches that have happened after the i-th user has enrolled, i.e. the number of falsely rejected users (derivation).

A high false match rate significantly impacts the usability of the system, as the probability of false matches increases with a growing number of users in the database. Over time the probability of being (falsely) rejected as a new user converges to 100%, making it nearly impossible for new users to be accepted.
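The growth described here can be sketched in code. The model below is an assumption: the i-th user is compared against the i - 1 users already enrolled, each comparison falsely matches independently with probability p, so the rejection probability is 1 - (1 - p)^(i - 1). The exact indexing used in the original derivation may differ.

```python
import math


def p_false_reject(i, p):
    """Probability that the i-th new user falsely matches an enrolled user.

    Models 1 - (1 - p)**(i - 1), evaluated with log1p/expm1 so that very
    small p does not get rounded away.
    """
    return -math.expm1((i - 1) * math.log1p(-p))


def expected_false_rejects(i, p):
    """Expected number of falsely rejected users among the first i.

    Closed form of sum(p_false_reject(k, p) for k in 1..i), namely
    i - (1 - (1 - p)**i) / p. The final subtraction loses precision
    when i * p is tiny, so treat results in that regime with care.
    """
    return i + math.expm1(i * math.log1p(-p)) / p


if __name__ == "__main__":
    p = 1e-6  # hypothetical effective false match rate
    for users in (10**3, 10**6, 10**7):
        print(users, p_false_reject(users, p))
```

Once i approaches 1/p, the rejection probability is no longer small, which is the saturation effect described in the paragraph above.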

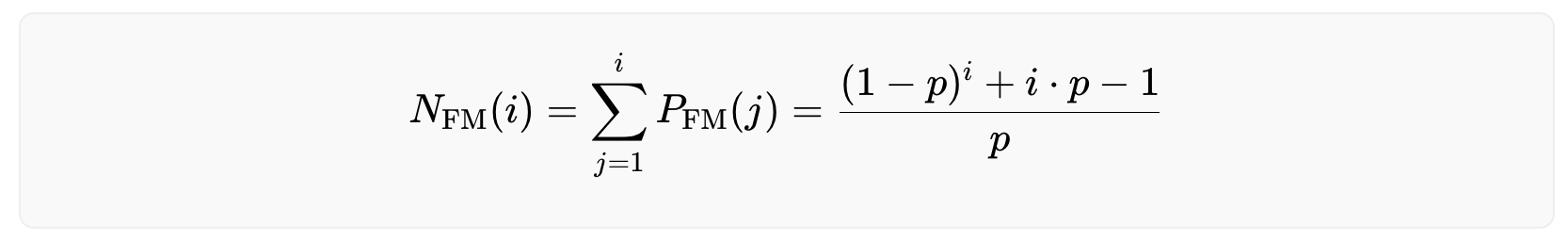

The following graph illustrates the performance of the biometric system using both the OR and AND rule. The graph is separated into two sections, with the left side representing the OR rule and the right side representing the AND rule. The top row of plots in the graph shows the probability of the i-th user being falsely rejected, and the bottom row of plots shows the expected number of users that have been falsely rejected after the i-th user has successfully enrolled. The different colors in the graph correspond to the three systems mentioned earlier: green represents Daugman’s system, blue represents NEC’s system, and red represents the initial worst case estimate.

The main findings from the analysis indicate that when using the OR-rule, the system's effectiveness breaks down with just a few million users, as the chance of a new user being falsely rejected becomes increasingly likely. In comparison, operating with the AND-rule provides a more sustainable solution for a growing user base.

Further, even the difference between the worst case and the best case estimate of current technology matters. The performance of biometric algorithms designed by Tools for Humanity has been continuously improving due to ongoing research efforts. This has been achieved by pushing beyond the state-of-the-art by replacing various components of the uniqueness verification process with deep learning models which also significantly improves the robustness to real world edge cases. At the time of writing, the algorithm's performance closely resembled the green graph depicted in the figure above when in an uncontrolled environment (depending on the exact choice of the FNMR). This is an accomplishment noteworthy in and of itself. Nonetheless, further improvements in the algorithm's performance are expected through ongoing research efforts. The optimum case is a vanishing error rate in practice on a global scale.

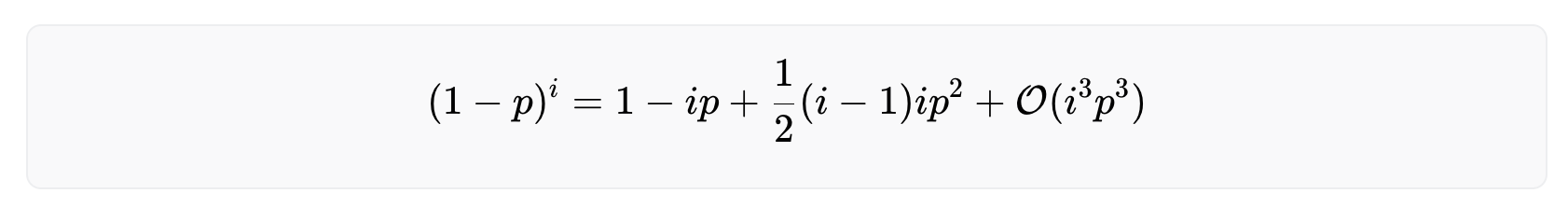

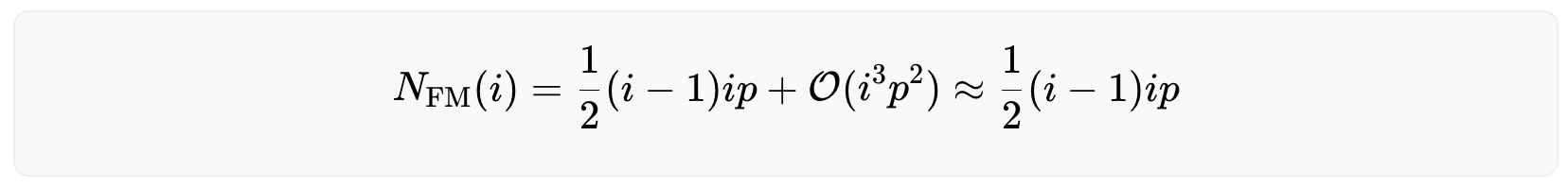

Note that for a large number of users (i≫1) and a very performant biometric system (p≪1) the equation above becomes numerically unstable. To calculate the number of rejected users for such a scenario, Taylor expand the critical part of the equation around small values of p.

The derivation of the above equation can be found here. Inserting this in the equation above yields

which is a valid approximation as long as i·p ≪ 1.
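A small-p expansion of this kind can be sketched numerically. The code assumes the exact expression N(i) = i - (1 - (1 - p)^i)/p, where the i-th user is compared against i - 1 enrolled users; expanding (1 - p)^i to third order in p gives N(i) ≈ i(i - 1)/2 · p - i(i - 1)(i - 2)/6 · p². Both the model and the function names are assumptions for illustration.

```python
import math


def expected_false_rejects_small_p(i, p):
    """Second-order expansion of i - (1 - (1 - p)**i) / p in p.

    Only reasonable while i * p is much smaller than 1.
    """
    first_order = i * (i - 1) / 2 * p
    second_order = i * (i - 1) * (i - 2) / 6 * p ** 2
    return first_order - second_order


def expected_false_rejects_sum(i, p):
    """Term-by-term reference value; O(i), so only practical for small i."""
    return sum(-math.expm1((k - 1) * math.log1p(-p)) for k in range(1, i + 1))


if __name__ == "__main__":
    i, p = 100, 1e-6  # hypothetical values with i * p << 1
    print(expected_false_rejects_small_p(i, p))
    print(expected_false_rejects_sum(i, p))
```

The expansion avoids subtracting two nearly equal numbers, which is what makes the closed form unreliable in this regime. When i·p is not small, the expansion no longer applies and the exact expression should be used instead.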

False Non Matches

When it comes to fraudulent users, the probability of them not being matched stays constant and does not increase with the number of users in the system. This is because there is only one other iris that can cause a false non-match - the user's own iris from their previous enrollment. Thus, the probability of encountering a false non-match is given by

The number of expected false non matches can be calculated with

with j indicating the j-th untrustworthy user who tries to fool the system.
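Under the assumption stated above, that each repeat attempt is compared against exactly one relevant prior enrollment and slips through independently with probability equal to the (effective) false non-match rate, the two expressions can be written as follows. The symbol names are chosen here for illustration.

```latex
P_{\mathrm{FNM}} = \mathrm{FNMR},
\qquad
N_{\mathrm{FNM}}(j) = \sum_{k=1}^{j} \mathrm{FNMR} = j \cdot \mathrm{FNMR}
```

Unlike the false match case, the expected number of undetected duplicates grows only linearly with the number of fraudulent attempts and is independent of the size of the database.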

Conclusion

The conclusion is that iris recognition can establish uniqueness on a global scale. Further, to onboard billions of individuals, the algorithm needs to use the AND-rule. Otherwise, the rejection rate will be too high and it will be practically impossible to onboard billions of users.

The current performance is already beyond the original conservative estimate and the project expects the system to eventually surpass current state-of-the-art lab environment performance, even if subject to an uncontrolled environment: On the one hand, the custom hardware comprises an imaging system that outperforms typical iris scanners by more than an order of magnitude in terms of image resolution. On the other hand, current advances in deep learning and computer vision offer promising directions towards a “deep feature generator” - a feature generation algorithm that does not rely on handcrafted rules but learns from data. So far the field of iris recognition has not yet leveraged this new technology.

Iris Feature Generation with Gabor Wavelets

The objective for iris feature generation algorithms is to generate the most discriminative features from iris images while reducing the dimensionality of data by removing unrelated or redundant data. Unlike 2D face images that are mostly defined by edges and shapes, iris images present rich and complex texture with repeating (semi-periodic) patterns of local variations in image intensity. In other words, iris images contain strong signals in both spatial and frequency domains and should be analyzed in both. Examples of iris images can be found on John Daugman's website.

Gabor filtering

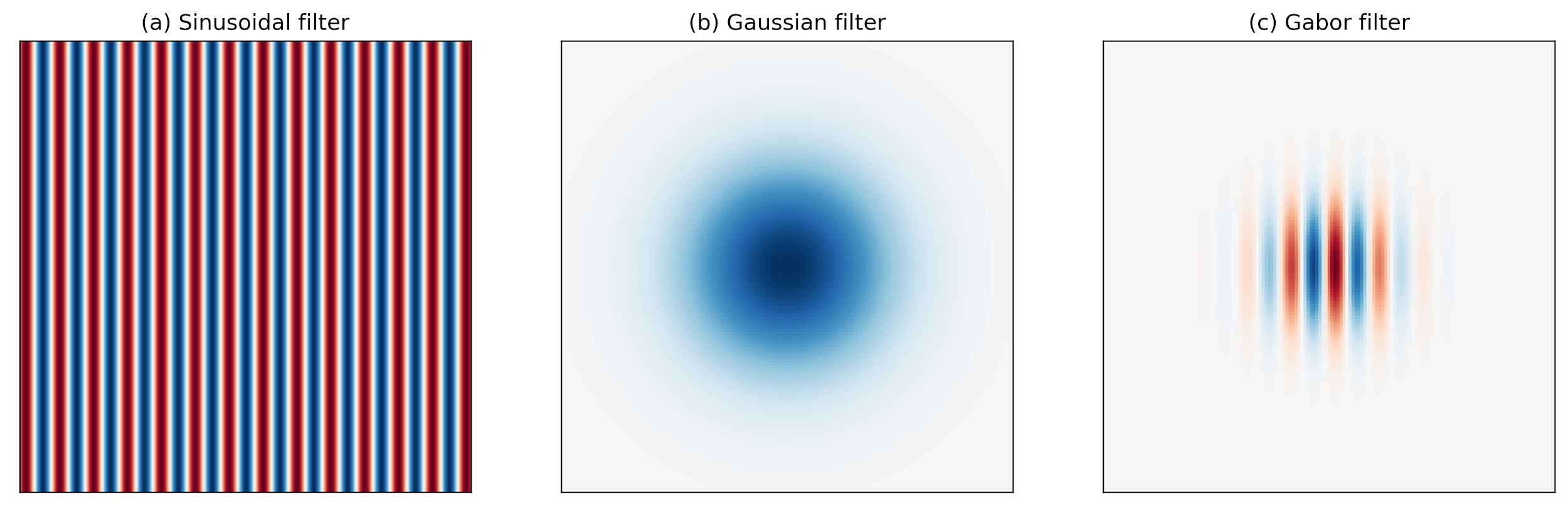

Research has shown that the localized frequency and orientation representation of Gabor filters is very similar to the human visual cortex’s representation and discrimination of texture. A Gabor filter analyzes a specific frequency content at a specific direction in a local region of an image. It has been widely used in signal and image processing for its optimal joint compactness in spatial and frequency domain.

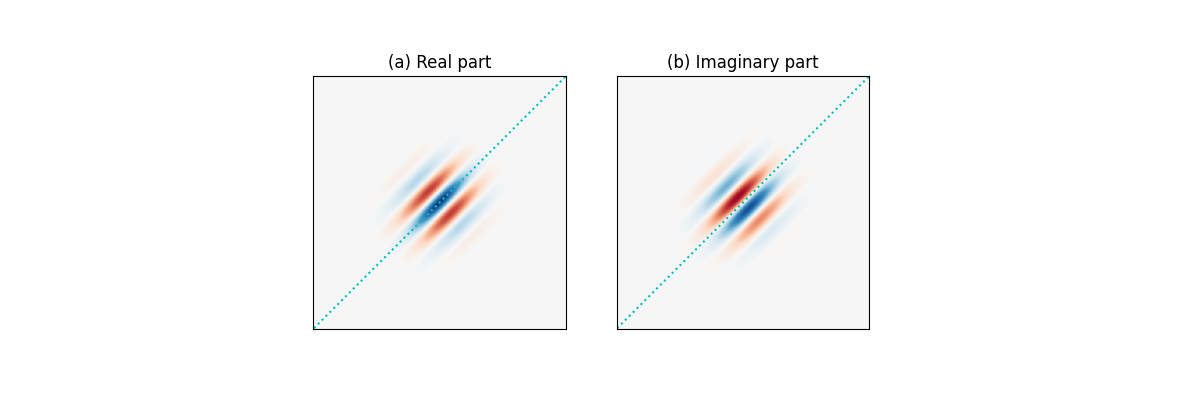

As shown above, a Gabor filter can be viewed as a sinusoidal signal of particular frequency and orientation modulated by a Gaussian envelope. Mathematically, it can be defined as

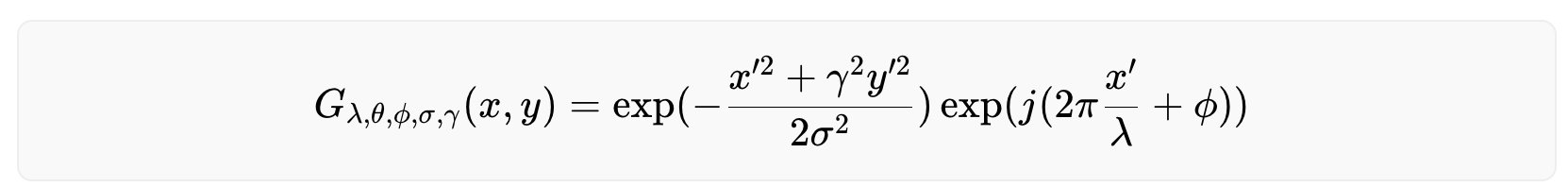

with $x' = x\cos\theta + y\sin\theta$ and $y' = -x\sin\theta + y\cos\theta$.

Among the parameters, σ and γ represent the standard deviation and the spatial aspect ratio of the Gaussian envelope, respectively, λ and ϕ are the wavelength and phase offset of the sinusoidal factor, respectively, and θ is the orientation of the Gabor function. Depending on its tuning, a Gabor filter can resolve pixel dependencies best described by narrow spectral bands. At the same time, its spatial compactness accommodates spatial irregularities.
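The parameters described above can be made concrete with a short NumPy sketch. It assumes the standard complex Gabor form (a Gaussian envelope multiplied by a complex sinusoid in rotated coordinates); the function name `gabor_kernel`, the grid size, and the parameter values are illustrative choices, not the deployed filters.

```python
import numpy as np


def gabor_kernel(size, sigma, gamma, lam, phi, theta):
    """Complex Gabor kernel sampled on an odd (size x size) grid centred at 0."""
    half = size // 2
    y, x = np.mgrid[-half:half + 1, -half:half + 1].astype(float)
    # Rotate the coordinates by the filter orientation theta.
    x_r = x * np.cos(theta) + y * np.sin(theta)
    y_r = -x * np.sin(theta) + y * np.cos(theta)
    # Gaussian envelope: standard deviation sigma, spatial aspect ratio gamma.
    envelope = np.exp(-(x_r**2 + (gamma * y_r) ** 2) / (2.0 * sigma**2))
    # Complex sinusoid: wavelength lam, phase offset phi.
    carrier = np.exp(1j * (2.0 * np.pi * x_r / lam + phi))
    return envelope * carrier


# Illustrative parameter values only (assumptions, not the deployed tuning).
kernel = gabor_kernel(size=31, sigma=5.0, gamma=0.5, lam=10.0, phi=0.0, theta=np.pi / 4)
even_part, odd_part = kernel.real, kernel.imag
```

With a zero phase offset, the real part is symmetric and the imaginary part antisymmetric about the kernel centre, which is the quadrature pair used for feature generation.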

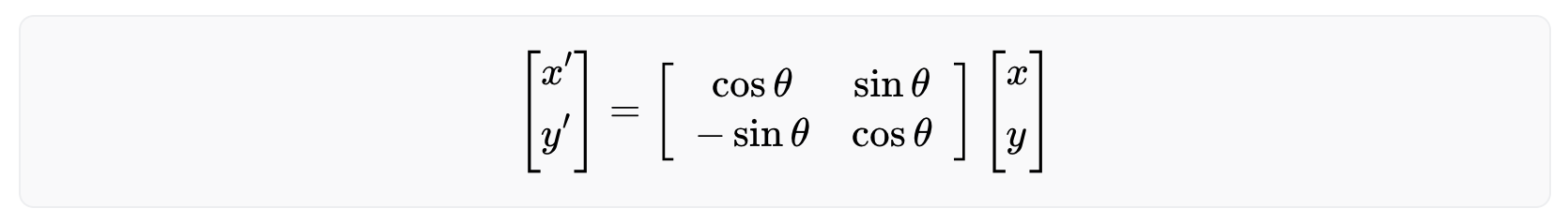

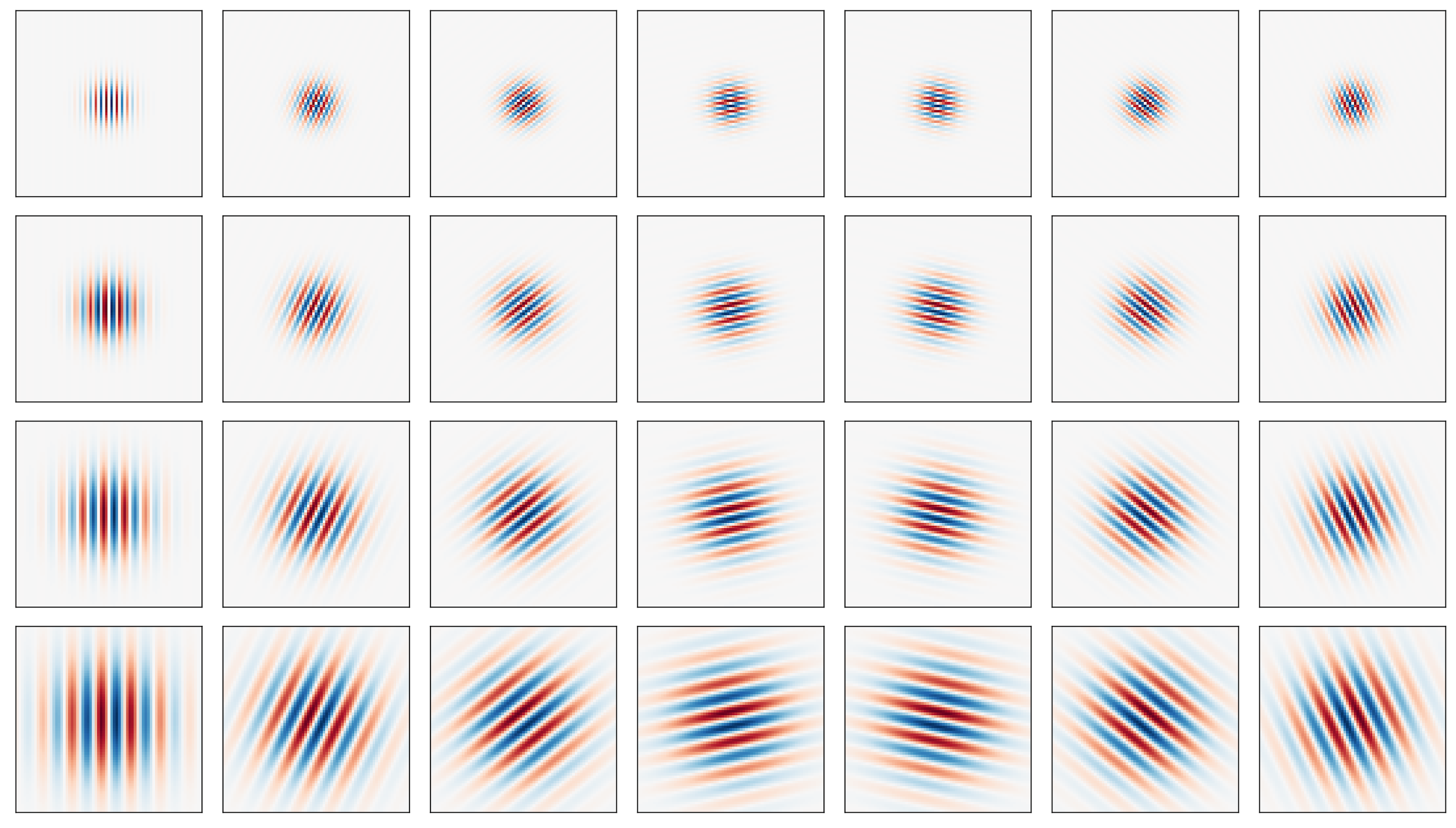

The following figure shows a series of Gabor filters at a 45 degree angle in increasing spectral selectivity. While the leftmost Gabor wavelet resembles a Gaussian, the rightmost Gabor wavelet follows a harmonic function and selects a very narrow band from the spectrum. The filters best suited to iris feature generation lie in the middle, between these two extremes.

Because a Gabor filter is a complex filter, the real and imaginary parts act as two filters in quadrature. More specifically, as shown in the figures below, (a) the real part is even-symmetric and will give a strong response to features such as lines; while (b) the imaginary part is odd-symmetric and will give a strong response to features such as edges. It is important to maintain a zero DC component in the even-symmetric filter (the odd-symmetric filter already has zero DC). This ensures zero filter response on a constant region of an image regardless of the image intensity.

Multi-scale Gabor filtering

Like most textures, iris texture lives on multiple scales (controlled by σ). It is therefore natural to represent it using filters of multiple sizes. Many such multi-scale filter systems follow the wavelet building principle, that is, the kernels (filters) in each layer are scaled versions of the kernels in the previous layer, and, in turn, scaled versions of a mother wavelet. This eliminates redundancy and leads to a more compact representation. Gabor wavelets can further be tuned by orientations, specified by θ. The figure below shows the real part of 28 Gabor wavelets with four scales and seven orientations.

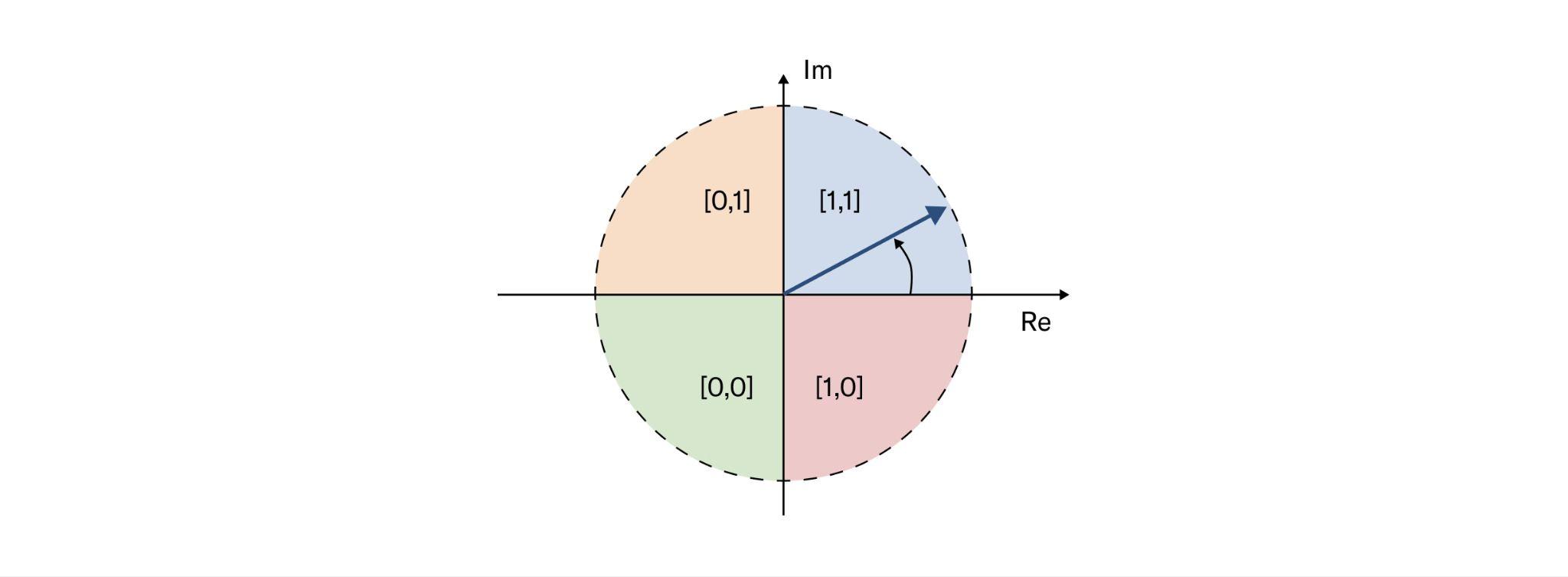
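A bank of four scales and seven orientations can be sketched as follows. This is a minimal illustration under stated assumptions: each scale doubles both the envelope width and the wavelength so that kernels are scaled copies of one mother wavelet, and the DC component of the even (real) part is removed by subtracting its mean, which is one simple option among several. All names and default values are hypothetical.

```python
import numpy as np


def gabor(size, sigma, lam, theta, gamma=1.0):
    """Complex Gabor kernel on an odd (size x size) grid."""
    half = size // 2
    y, x = np.mgrid[-half:half + 1, -half:half + 1].astype(float)
    x_r = x * np.cos(theta) + y * np.sin(theta)
    y_r = -x * np.sin(theta) + y * np.cos(theta)
    envelope = np.exp(-(x_r**2 + (gamma * y_r) ** 2) / (2.0 * sigma**2))
    return envelope * np.exp(2j * np.pi * x_r / lam)


def gabor_bank(base_sigma=2.0, n_scales=4, n_orientations=7, scale_step=2.0):
    """Build n_scales * n_orientations kernels following the wavelet principle."""
    bank = []
    for s in range(n_scales):
        sigma = base_sigma * scale_step**s
        lam = 2.0 * sigma                       # wavelength scales with the envelope
        size = 2 * int(np.ceil(3 * sigma)) + 1  # cover roughly +/- 3 sigma
        for o in range(n_orientations):
            theta = o * np.pi / n_orientations
            kernel = gabor(size, sigma, lam, theta)
            # Subtract the mean of the real part so the even filter has zero DC.
            bank.append(kernel - kernel.real.mean())
    return bank


bank = gabor_bank()
```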

Phase-quadrant demodulation and encoding

After a Gabor filter is applied to an iris image, the filter response at each analyzed region is then demodulated to generate its phase information. This process is illustrated in the figure below, as it identifies in which quadrant of the complex plane each filter response is projected to. Note that only phase information is recorded because it is more robust than the magnitude, which can be contaminated by extraneous factors such as illumination, imaging contrast, and camera gain.

Another desirable feature of the phase-quadrant demodulation is that it produces a cyclic code. Unlike a binary code in which two bits may change, making some errors arbitrarily more costly than others, a cyclic code only allows a single bit change in rotation between any adjacent phase quadrants. Importantly, when a response falls very close to the boundary between adjacent quadrants, its resulting code is considered a fragile bit. These fragile bits are usually less stable and could flip values due to changes in illumination, blurring or noise. There are many methods to deal with fragile bits, and one such method could be to assign them lower weights during matching.

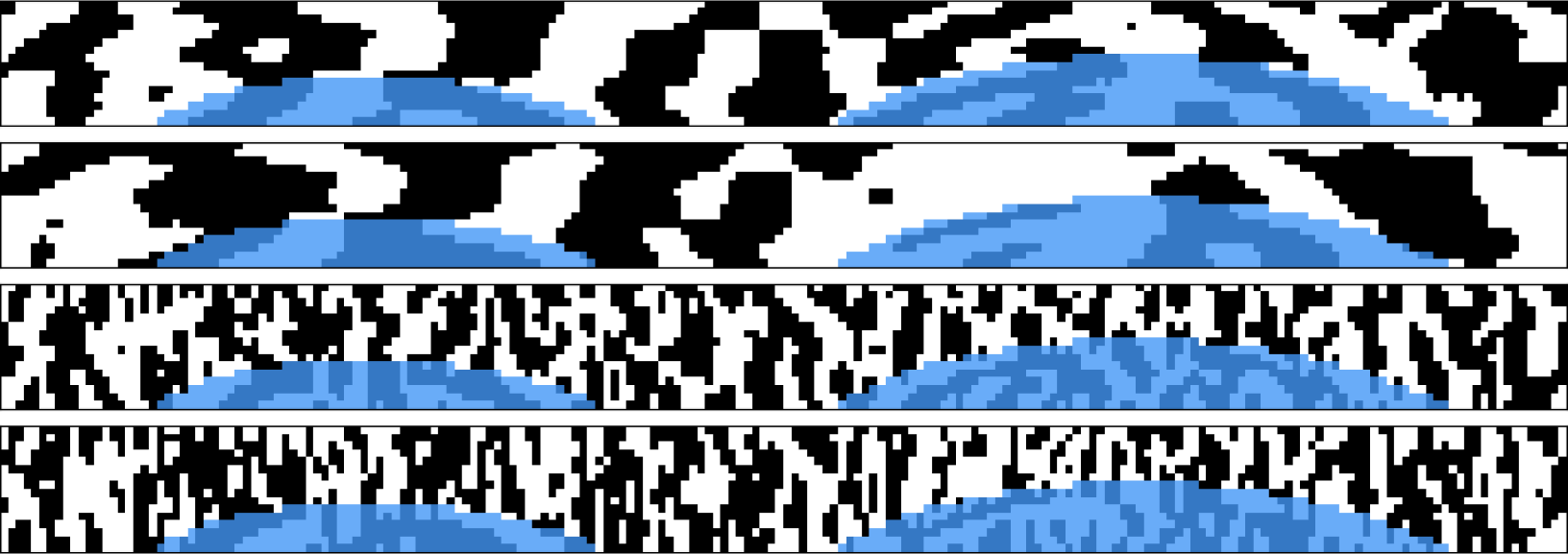
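The phase-quadrant encoding and the notion of fragile bits can be illustrated with a small sketch. The bit convention (1 for a non-negative component) and the relative threshold used to flag fragile bits are assumptions made for illustration only.

```python
import numpy as np


def phase_quadrant_bits(response):
    """Encode each complex response as two bits: (Re >= 0, Im >= 0)."""
    response = np.asarray(response)
    bits = np.stack([response.real >= 0, response.imag >= 0], axis=-1)
    return bits.astype(np.uint8)


def fragile_mask(response, rel_threshold=0.1):
    """Flag bits whose deciding component is small relative to the magnitude.

    rel_threshold is a hypothetical tuning value.
    """
    response = np.asarray(response)
    magnitude = np.abs(response) + 1e-12
    return np.stack(
        [np.abs(response.real) / magnitude < rel_threshold,
         np.abs(response.imag) / magnitude < rel_threshold],
        axis=-1,
    )


# One response in the middle of each quadrant, going counter-clockwise.
quadrants = np.exp(1j * (np.pi / 4 + np.arange(4) * np.pi / 2))
codes = phase_quadrant_bits(quadrants)
# Number of bits that change when moving to the next quadrant (cyclically).
changes = (codes ^ np.roll(codes, -1, axis=0)).sum(axis=1)
```

Here `changes` contains only ones: moving between adjacent quadrants flips exactly one bit, which is the cyclic property described above.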

When multi-scale Gabor filtering is applied to a given iris image, multiple iris codes are produced accordingly and concatenated to form the final iris template. Depending on the number of filters and their stride factors, an iris template can be several orders of magnitude smaller than the original iris image.

Robustness of iris codes

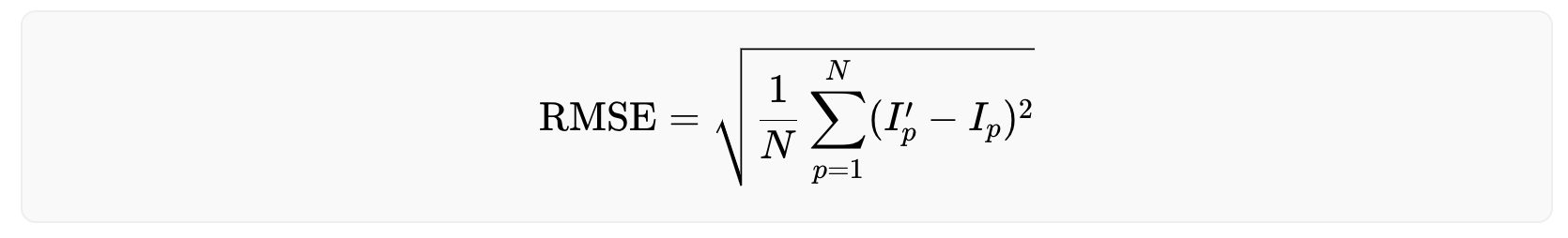

Because iris codes are generated based on the phase responses from Gabor filtering, they are rather robust against illumination, blurring and noise. To measure this quantitatively, each effect, namely illumination (gamma correction), blurring (Gaussian filtering), and Gaussian noise, is added to an iris image in slow progression, and the drift of the iris code is measured. The amount of added effect is measured by the Root Mean Square Error (RMSE) of pixel values between the modified and original image, and the amount of drift is measured by the Hamming distance between the new and original iris code. Mathematically, RMSE is defined as:

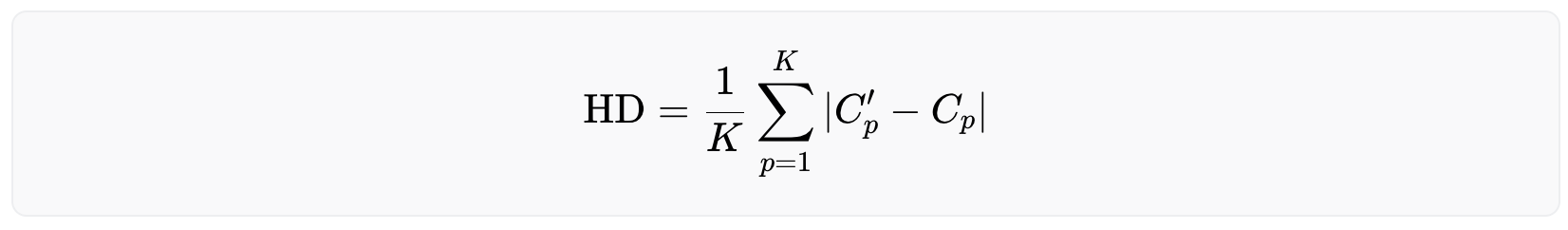

where N is the number of pixels in the original image I and the modified image I′. The Hamming distance is defined as:

$$\mathrm{HD}(C, C') = \frac{1}{K}\sum_{k=1}^{K} C_k \oplus C'_k$$

where K is the number of bits (0/1) in the original iris code C and the new iris code C′. A Hamming distance of 0 means a perfect match, while 1 means the iris codes are completely opposite. The Hamming distance between two randomly generated iris codes is around 0.5.
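Both measures are straightforward to compute. The sketch below assumes images are arrays of pixel values and iris codes are arrays of 0/1 bits; the function names are illustrative.

```python
import numpy as np


def rmse(image, modified):
    """Root mean square error between two images of equal shape."""
    image = np.asarray(image, dtype=float)
    modified = np.asarray(modified, dtype=float)
    return float(np.sqrt(np.mean((image - modified) ** 2)))


def hamming_distance(code_a, code_b):
    """Fraction of bits that differ between two iris codes of equal length."""
    code_a = np.asarray(code_a, dtype=bool)
    code_b = np.asarray(code_b, dtype=bool)
    return float(np.mean(code_a ^ code_b))


rng = np.random.default_rng(0)
code = rng.integers(0, 2, size=10_000)
other = rng.integers(0, 2, size=10_000)

same = hamming_distance(code, code)           # identical codes
opposite = hamming_distance(code, 1 - code)   # every bit flipped
random_pair = hamming_distance(code, other)   # independent random codes
```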

The following figures help explain, both visually and quantitatively, the impact of illumination, blurring and noise on the robustness of iris codes. For illustration purposes, these results are not generated with the actual filters that are deployed but nevertheless demonstrate the general property of Gabor filtering. Also, the iris image has been normalized from a donut shape in Cartesian coordinates to a fixed-size rectangular shape in polar coordinates. This step is necessary to standardize the format, mask out occlusion and enhance the iris texture.

As shown in the figure below, iris codes are very robust against grey-level transformations associated with illumination as the HD barely changes with increasing RMSE. This is because increasing the brightness of pixels reduces the dynamic range of pixel values, but barely affects the frequency or spatial properties of the iris texture.

Blurring, on the other hand, reduces image contrast and could lead to compromised iris texture. However, as shown below, iris codes remain relatively robust even when strong blurring makes iris texture indiscernible to the naked eye. This is because the phase information from Gabor filtering captures the location and presence of texture rather than its strength. As long as the frequency or spatial property of the iris texture is present, though severely weakened, the iris codes remain stable. Note that blurring compromises high-frequency iris texture and therefore impacts high-frequency Gabor filters more, which is why a bank of multi-scale Gabor filters is used.

Finally, bigger changes in iris codes are observed when Gaussian noise is added, as both spatial and frequency components of the texture are polluted and more bits become fragile. Even when the iris texture is overwhelmed with noise and becomes indiscernible, the drift in iris codes is still small, with a Hamming distance below 0.2, compared to ≈0.5 when matching two random iris codes. This demonstrates the effectiveness of iris feature generation using Gabor filters even in the presence of noise.

Conclusion

Iris feature generation is a necessary and important step in iris recognition. It reduces the dimensionality of the iris representation from a high resolution image to a much lower dimensional binary code, while preserving the most discriminative texture features using a bank of Gabor filters. It is worth noting that Gabor filters have their own limitations: for example, one cannot design Gabor filters with arbitrarily wide bandwidth while maintaining a near-zero DC component in the even-symmetric filter. This limitation can be overcome by using Log-Gabor filters. In addition, Gabor filters are not necessarily optimized for iris texture, and machine-learned iris-domain specific filters (e.g. BSIF) have the potential to achieve further improvements in feature generation and recognition performance in general. Moreover, the project’s contributors are investigating novel approaches to leverage higher quality images and the latest advances in the field of deep metric learning and deep representation learning to push the accuracy of the system beyond the state-of-the-art to make the system as inclusive as possible.

Although the resilience of iris feature generation to external factors has been demonstrated, even minor variability in iris codes matters at the scale of a billion people: the tail end of the distribution dictates the error rates and thus the number of false rejections.

Iris Inference System

Building upon the theoretical foundation established in the previous sections, this section now focuses on the practical application of these principles within the Worldcoin project. Having explored the scalability of iris recognition technology and the process of feature generation using Gabor wavelets, this section explains the details of the image processing. By the end of this section, one will have a thorough understanding of how Worldcoin's iris recognition algorithm functions to ensure accurate and scalable verification of an individual's uniqueness.

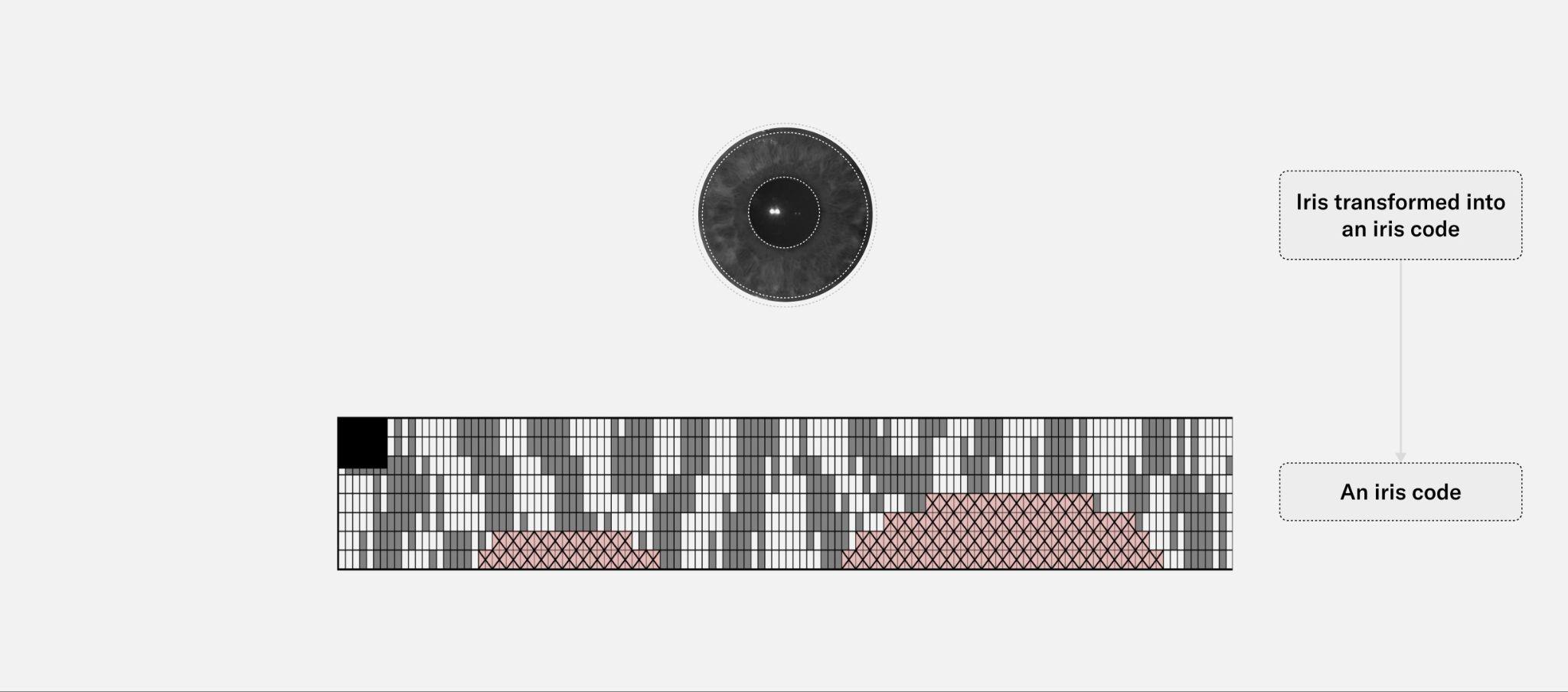

Pipeline overview

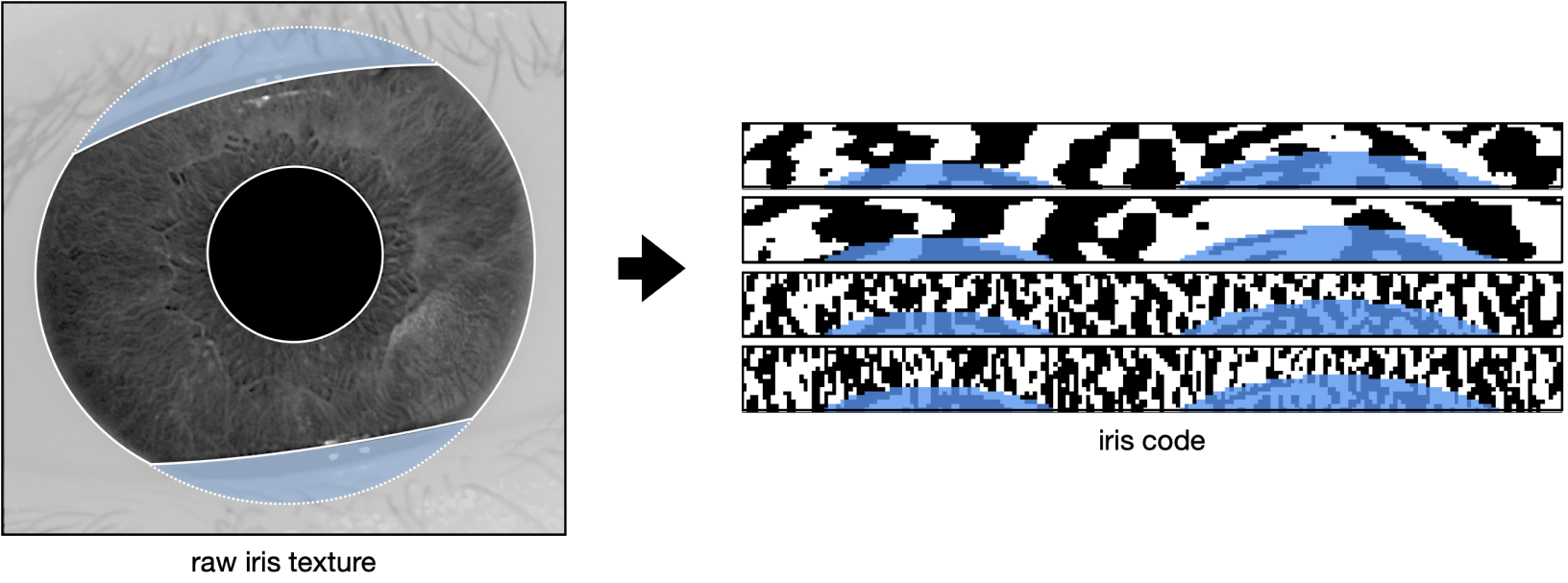

The objective of this pipeline is to convert high-resolution infrared images of a human's left and right eye into an iris code: a condensed mathematical and abstract representation of the iris' entropy that can be used for verification of uniqueness at scale. Iris codes were introduced by John Daugman in this paper and remain to this day the most widely used way to abstract iris texture in the iris recognition field. Like most state-of-the-art iris recognition pipelines, Worldcoin’s pipeline is composed of four main segments: segmentation, normalization, feature generation and matching.

Refer to the image below for an example of a high resolution image of the iris acquired in the near infrared spectrum. The right hand side of the image shows the corresponding iris code, which is itself composed of response maps to two 2D Gabor wavelets. These response maps are quantized in two bits so that the final iris code has dimensions of $N_r \times N_\theta \times 2 \times 2$, with $N_r$ and $N_\theta$ being the number of radial and angular positions where these filters are applied. For more details, see the previous section. While only the iris code of one eye is shown below, note that an iris template consists of the iris codes from both eyes.

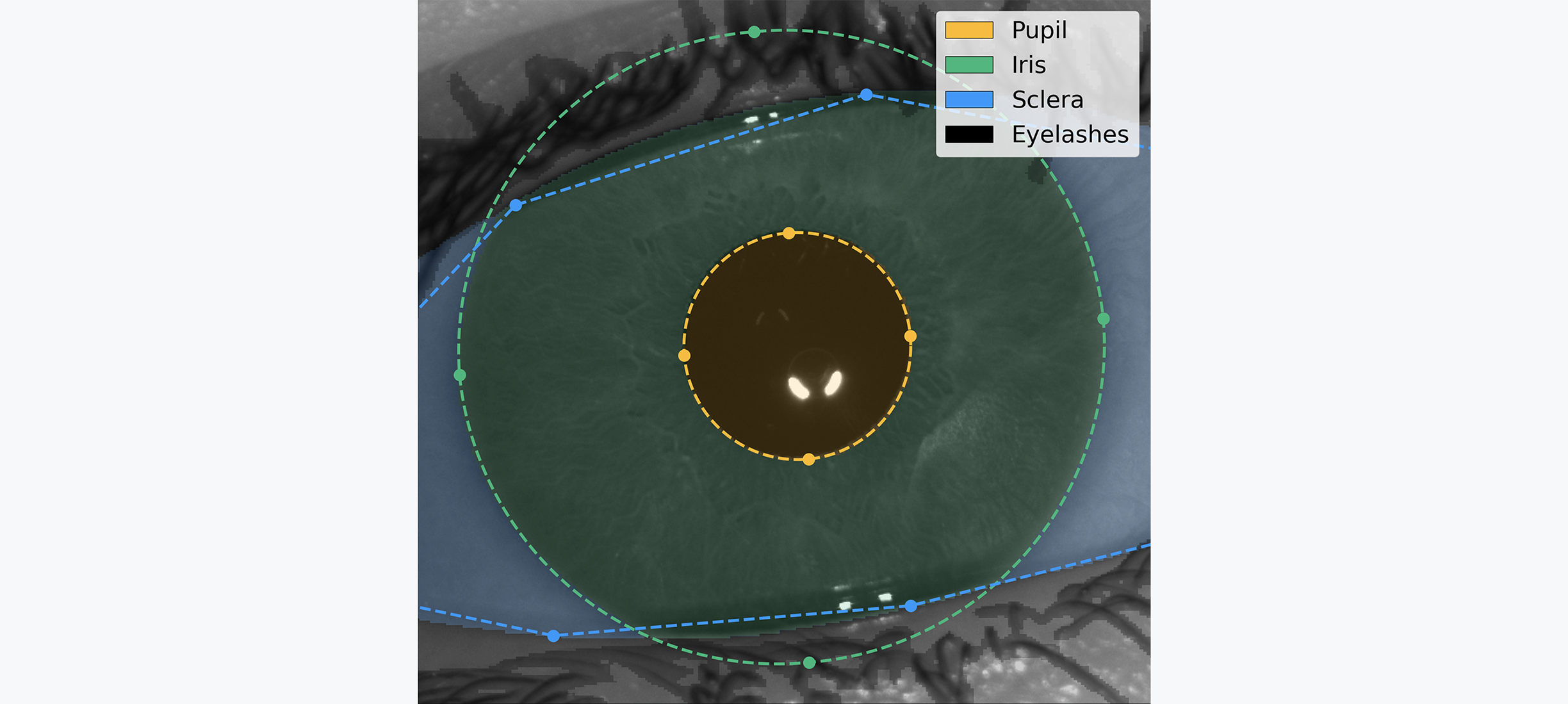

The purpose of the segmentation step is to understand the geometry of the input image. The location of the iris, pupil, and sclera are determined, as well as the dilation of the pupil and presence of eyelashes or hair covering the iris texture. The segmentation model classifies every pixel of the image as pupil, iris, sclera, eyelash, etc. These labels are then post-processed to understand the geometry of the subject's eye.

The image and its geometry then pass through tight quality assurance. Only sharp images where enough iris texture is visible are considered valid, because the quantity and quality of available bits in the final iris codes directly impact the system's overall performance.

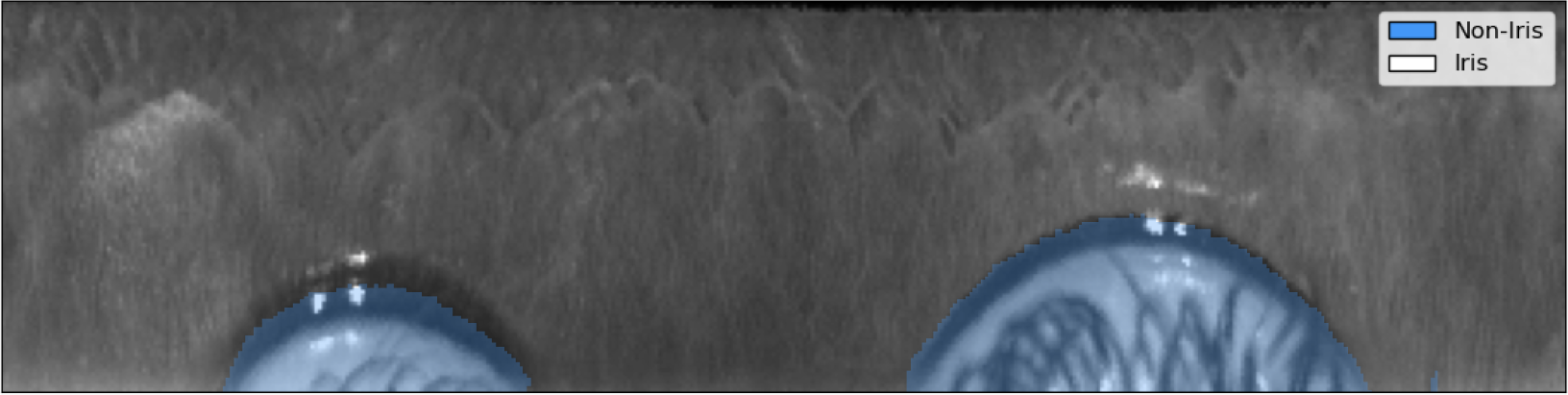

Once the image is segmented and validated, the normalization step takes all the pixels relevant to the iris texture and unfolds them into a stable cartesian (rectangular) representation.

The normalized image is then converted into an iris code during the feature generation step. During this process, a Gabor wavelet kernel convolves across the image, converting the iris texture into a standardized iris code. For every point in a grid overlapping the image, two bits are derived that represent the sign of the real and imaginary components of the filter response, respectively. This process synthesizes a unique representation of the iris texture, which can easily be compared with others by using the Hamming distance metric. This metric quantifies the proportion of bits that differ between any two compared iris codes.

The following sections will explain each of the aforementioned steps in more detail, by following the journey of an example iris image through the biometric pipeline. This image was taken by the Orb, during a signup in the TFH lab. It is shared with user consent and faithfully represents what the camera sees during a live uniqueness verification.

The eye is a remarkable system that exhibits various dynamic behaviors, including blinking, squinting, closing, as well as the ability of the pupil to dilate or constrict and the eyelashes or any object to cover the iris. The following section also explores how the biometric pipeline can be robust in the presence of such natural variability.

Segmentation

Iris recognition was first developed in 1993 by John Daugman and, although the field has advanced since the turn of the millennium, it continues to be heavily influenced by legacy methods and practices. Historically, the morphology of the eye in iris recognition has been identified using classical computer vision methods such as the Hough Transform or circle fitting. In recent years, Deep Learning has brought about significant improvements in the field of computer vision, providing new tools for understanding and analyzing the eye physiology with unprecedented depth.

Novel methods for segmenting high-resolution infrared iris images are proposed in Lazarski et al. by the Tools for Humanity team. The architecture consists of an encoder that is shared by two decoders: one that estimates the geometry of the eye (pupil, iris, and eyeball) and the other that focuses on noise, i.e., non-eye-related elements that overlay the geometry and potentially obscure the iris texture (eyelashes, hair strands, etc.). This dichotomy allows for easy processing of overlapping elements and provides a high degree of flexibility in training these detectors. The architecture builds on DeepLabv3+ with a MobileNet v2 backbone.

Acquiring labels for noise elements is significantly more time-consuming than acquiring labels for geometry, as it requires a high level of precision for identifying intertwined eyelashes. It takes 20 to 80 minutes to label eyelashes in a single image, depending on the levels of blur and the subject's physiology, while it only takes about 4 minutes to label the geometry to required levels of precision. For that reason, noise objects (e.g. eyelashes) are decoupled from geometry objects (pupil, iris and sclera) which allows for significant financial and time savings combined with a quality gain.

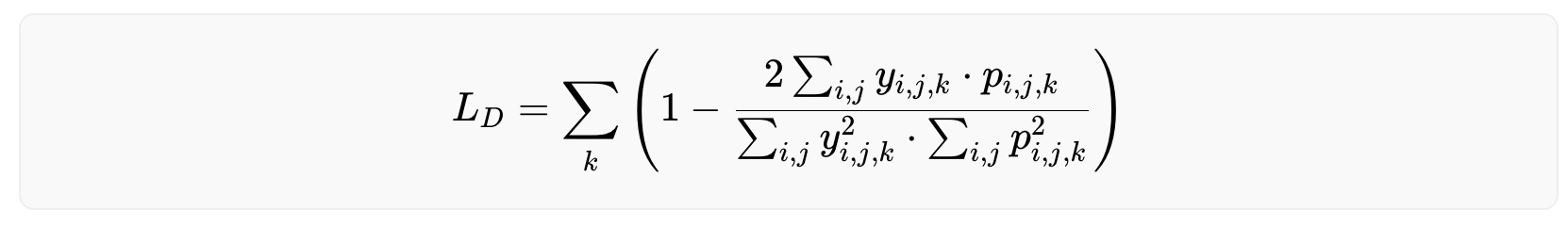

The model was trained over a mix of Dice Loss and Boundary Loss. The Dice loss can be expressed as

$$\mathcal{L}_{\text{Dice}} = 1 - \frac{2\sum_{i,j,k} p_{i,j,k}\, g_{i,j,k}}{\sum_{i,j,k} p_{i,j,k} + \sum_{i,j,k} g_{i,j,k}}$$

with $g_{i,j,k}$ being the one-hot encoded ground truth and $p_{i,j,k}$ the model’s output for the pixel (i,j) as a probability. The third index k represents the class (e.g. pupil, iris, eyeball, eyelash or background). The Dice loss essentially measures the similarity between two sets, i.e. the label and the model's prediction.
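A minimal NumPy sketch of a Dice loss over per-pixel class probabilities follows. It assumes a common soft Dice formulation, one minus twice the overlap divided by the total mass of prediction and label; the exact variant used for training may differ, and the smoothing constant `eps` is an added assumption to avoid division by zero.

```python
import numpy as np


def dice_loss(pred, target, eps=1e-7):
    """Soft Dice loss.

    pred:   (H, W, K) array of class probabilities per pixel.
    target: (H, W, K) one-hot encoded ground truth.
    """
    pred = np.asarray(pred, dtype=float)
    target = np.asarray(target, dtype=float)
    overlap = np.sum(pred * target)
    total = np.sum(pred) + np.sum(target)
    return float(1.0 - (2.0 * overlap + eps) / (total + eps))


# Toy example with K = 3 classes on an 8 x 8 image.
rng = np.random.default_rng(0)
labels = rng.integers(0, 3, size=(8, 8))
one_hot = np.eye(3)[labels]

perfect = dice_loss(one_hot, one_hot)                     # prediction equals label
wrong = dice_loss(np.roll(one_hot, 1, axis=-1), one_hot)  # every pixel misclassified
```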

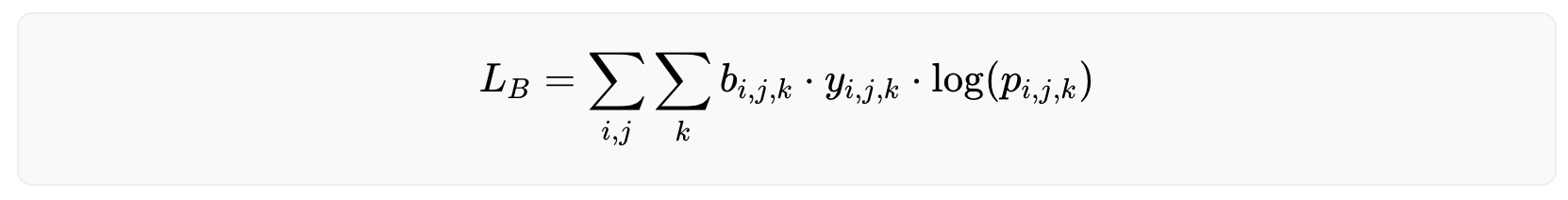

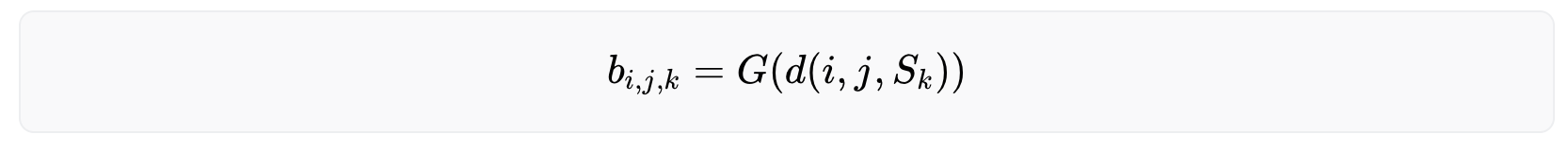

Accurate identification of the boundaries of the iris is essential for successful iris recognition, as even a small warp in the boundary can result in a warp of the normalized image along the radial direction. To address this, a weighted cross-entropy loss was also introduced that focuses on the zone at the boundary between classes, in order to encourage sharper boundaries. It is mathematically represented as:

$$\mathcal{L}_{\text{Boundary}} = -\sum_{i,j,k} w_{i,j,k}\, g_{i,j,k} \log p_{i,j,k}$$

with the same notations as before and $w_{i,j,k}$ being the boundary weight, which represents how close the pixel (i,j) is to the boundary between class k and any other class. A Gaussian blur is then applied to the contour to prioritize the precision of the model on the exact boundary while keeping a lower degree of focus on the general area around it:

$$w_{i,j,k} = G\big(d((i,j), \partial\Omega_k)\big)$$

with $d((i,j), \partial\Omega_k)$ being the distance between the point (i,j) and the surface $\partial\Omega_k$, defined as the minimum of the Euclidean distances between (i,j) and all points of $\partial\Omega_k$; $\partial\Omega_k$ the boundary between class k and all other classes; and $G$ the Gaussian distribution centered at 0 with some finite variance.
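The boundary weighting can be sketched on a small label map. This is an illustration under several assumptions: the boundary of a class is taken to be the pixels of that class with at least one 4-neighbour of another class, distances are computed by brute force (suitable only for small images), the Gaussian is unnormalized so that boundary pixels get weight 1, and the loss is averaged over pixels. The function names and `sigma` are hypothetical.

```python
import numpy as np


def boundary_weights(labels, n_classes, sigma=2.0):
    """weights[i, j, k]: Gaussian of the distance from (i, j) to the boundary of class k."""
    height, width = labels.shape
    coords = np.stack(np.mgrid[0:height, 0:width], axis=-1).reshape(-1, 2).astype(float)
    weights = np.zeros((height, width, n_classes))
    for k in range(n_classes):
        mask = labels == k
        padded = np.pad(mask, 1, mode="edge")
        # True where all four neighbours also belong to class k.
        interior = (padded[:-2, 1:-1] & padded[2:, 1:-1]
                    & padded[1:-1, :-2] & padded[1:-1, 2:])
        points = np.argwhere(mask & ~interior).astype(float)
        if len(points) == 0:
            continue  # class absent or without a boundary in this image
        # Minimum Euclidean distance from every pixel to the boundary points.
        diff = coords[:, None, :] - points[None, :, :]
        dist = np.sqrt((diff**2).sum(axis=-1)).min(axis=1).reshape(height, width)
        weights[..., k] = np.exp(-(dist**2) / (2.0 * sigma**2))
    return weights


def boundary_loss(pred, target, weights, eps=1e-7):
    """Boundary-weighted cross-entropy, averaged over pixels."""
    return float(-np.mean(np.sum(weights * target * np.log(pred + eps), axis=-1)))


# Toy label map: class 0 on the left half, class 1 on the right half.
labels = np.zeros((16, 16), dtype=int)
labels[:, 8:] = 1
target = np.eye(2)[labels]
weights = boundary_weights(labels, n_classes=2)
uniform_loss = boundary_loss(np.full_like(target, 0.5), target, weights)
```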

Experiments were conducted with other loss functions (e.g. convex prior), architectures (e.g. single-headed model), and backbones (e.g. ResNet-101), and this setup was found to have the best performance in terms of accuracy and speed. The following graph shows the iris image overlaid with the segmentation maps as predicted by the model. In addition, landmarks calculated by a separate quality assessment AI model during the image capture phase are displayed. This model produces quality metrics to ensure that only high-quality images are used in the segmentation phase and that the iris code is generated accurately for verification of uniqueness: sharp image focused on the iris texture, well-opened eye gazing in the camera, etc.

Normalization

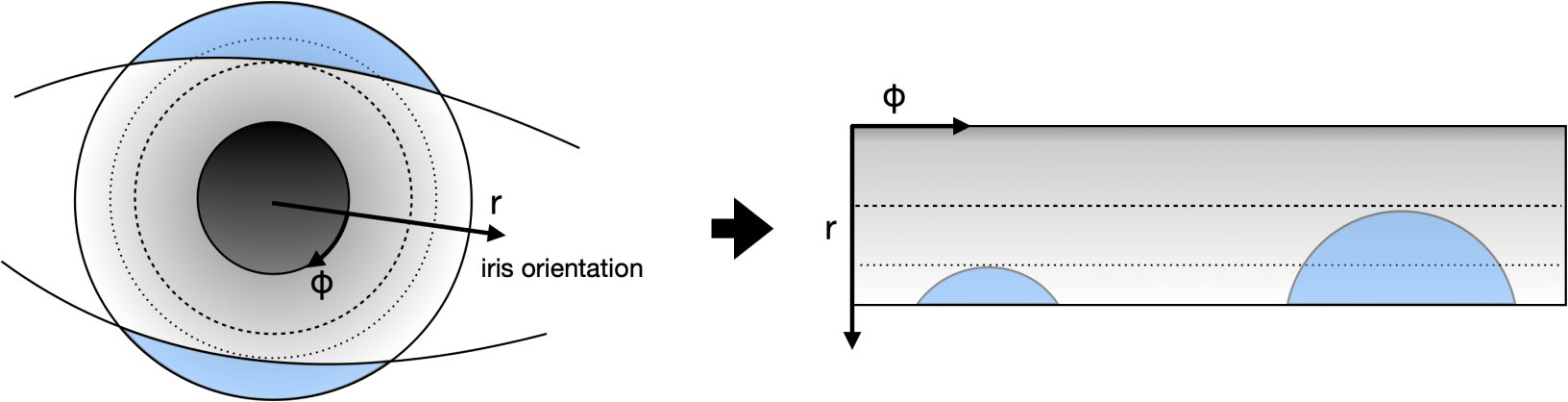

The goal of this step is to separate meaningful iris texture from the rest of the image (skin, eyelashes, sclera, etc.). To achieve this, the iris texture is projected from its original Cartesian coordinate system to a polar coordinate system, as illustrated in the following image. The iris orientation is defined as the vector pointing from the pupil center of one eye to the pupil center of the other eye.

This process reduces variability in the image by canceling out variations such as the person’s distance from the camera, the pupil constriction or dilation due to the amount of light in the environment, and the rotation of the person’s head. The image below illustrates the normalized version of the iris above. The two arcs of circles visible in the image are the eyelids, which were distorted from their original shape during the normalization process.
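The unwrapping step can be sketched as follows. The sketch assumes circular pupil and iris boundaries and nearest-neighbour sampling, which is a simplification: the pipeline described here derives the geometry from the segmentation output, and orientation correction and occlusion masking are omitted. All names and default sizes are illustrative.

```python
import numpy as np


def normalize_iris(image, pupil_xy, pupil_r, iris_xy, iris_r, n_radial=16, n_angular=128):
    """Unwrap the ring between the pupil and iris boundaries into a rectangle.

    Rows run from the pupil boundary (row 0) to the iris boundary (last row);
    columns run over the angle.
    """
    thetas = np.linspace(0.0, 2.0 * np.pi, n_angular, endpoint=False)
    radii = np.linspace(0.0, 1.0, n_radial)[:, None]
    # Points on the inner (pupil) and outer (iris) boundary for every angle.
    inner_x = pupil_xy[0] + pupil_r * np.cos(thetas)
    inner_y = pupil_xy[1] + pupil_r * np.sin(thetas)
    outer_x = iris_xy[0] + iris_r * np.cos(thetas)
    outer_y = iris_xy[1] + iris_r * np.sin(thetas)
    # Interpolate linearly between the two boundaries; this cancels out
    # pupil dilation and the distance of the subject from the camera.
    xs = (1.0 - radii) * inner_x[None, :] + radii * outer_x[None, :]
    ys = (1.0 - radii) * inner_y[None, :] + radii * outer_y[None, :]
    cols = np.clip(np.rint(xs).astype(int), 0, image.shape[1] - 1)
    rows = np.clip(np.rint(ys).astype(int), 0, image.shape[0] - 1)
    return image[rows, cols]


# Synthetic test image: each pixel stores its distance to the point (100, 100).
yy, xx = np.mgrid[0:200, 0:200]
image = np.hypot(xx - 100, yy - 100)
normalized = normalize_iris(image, (100, 100), 20, (100, 100), 80)
```

On this synthetic image, each row of `normalized` is nearly constant, because every row samples a single radius.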

Feature generation

Now that a stable, normalized iris texture is produced, an iris code can be computed that can be matched at scale. In short, various Gabor filters stride across the image, and their complex-valued responses are thresholded to generate two bits representing the existence of a line (resp. edge) at every selected point of the image. This technique, pioneered by John Daugman, and the subsequent iterations proposed by the iris recognition research community, remains state-of-the-art in the field.
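A minimal sketch of this step, using a single filter, is shown below. It assumes a normalized image whose columns correspond to the angular direction (so columns wrap around), a hypothetical stride of 4, and a small isotropic Gabor kernel whose real part has its DC component removed; none of these values reflect the deployed configuration.

```python
import numpy as np


def gabor(size, sigma, lam):
    """Small horizontal complex Gabor kernel with the DC of its real part removed."""
    half = size // 2
    y, x = np.mgrid[-half:half + 1, -half:half + 1].astype(float)
    kernel = np.exp(-(x**2 + y**2) / (2.0 * sigma**2)) * np.exp(2j * np.pi * x / lam)
    return kernel - kernel.real.mean()


def iris_code(normalized, kernel, stride=4):
    """Two bits (sign of real part, sign of imaginary part) per grid point."""
    kh, kw = kernel.shape
    image = np.asarray(normalized, dtype=float)
    # Replicate edges radially, wrap around angularly.
    padded = np.pad(image, ((kh // 2, kh // 2), (0, 0)), mode="edge")
    padded = np.pad(padded, ((0, 0), (kw // 2, kw // 2)), mode="wrap")
    rows = range(0, image.shape[0], stride)
    cols = range(0, image.shape[1], stride)
    code = np.zeros((len(rows), len(cols), 2), dtype=np.uint8)
    for a, i in enumerate(rows):
        for b, j in enumerate(cols):
            response = np.sum(padded[i:i + kh, j:j + kw] * np.conj(kernel))
            code[a, b] = (response.real >= 0, response.imag >= 0)
    return code


rng = np.random.default_rng(0)
normalized = rng.random((16, 128))   # stand-in for a normalized iris image
kernel = gabor(size=9, sigma=2.0, lam=6.0)
code = iris_code(normalized, kernel)
```

Because only the signs of the response are kept, scaling the image intensity by a positive factor leaves the code unchanged.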

Matching

Now that the iris texture is transformed into an iris code, it is ready to be matched against other iris codes. To do so, a masked fractional Hamming Distance (HD) is used: the proportion of non-masked iris code bits that have different values in the two iris codes.

Due to the parametrization of the Gabor wavelets, the value of each bit is equally likely to be 0 or 1. As the iris codes described above are made of more than 10,000 bits, two iris codes from different subjects will have an average Hamming distance of 0.5, with most (99.95%) iris codes deviating less than 0.05 HD away from this value (99.9994% deviating less than 0.07 HD). As several rotations of the iris code are compared to find the combination with highest matching probability, this average of 0.5 HD moves to 0.45 HD, with a probability of being lower than 0.38 HD.

It is therefore an extreme statistical anomaly to see two different eyes producing iris codes with a distance lower than 0.38 HD. On the contrary, two images captured of the same eye will produce iris codes with a distance generally below 0.3 HD. Applying a threshold in between allows the ability to reliably distinguish between identical and different identities.
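The matching step can be sketched as a masked fractional Hamming distance minimized over a few angular rotations, followed by a threshold. The sketch assumes codes and masks are boolean arrays whose second axis is the angular direction; the shift range and the threshold of 0.34 (chosen between the 0.3 and 0.38 values quoted above) are hypothetical.

```python
import numpy as np


def masked_hamming(code_a, mask_a, code_b, mask_b):
    """Fraction of differing bits among the bits that are valid in both codes."""
    valid = mask_a & mask_b
    n_valid = int(valid.sum())
    if n_valid == 0:
        raise ValueError("no bits are valid in both masks")
    return float(((code_a ^ code_b) & valid).sum() / n_valid)


def best_rotation_distance(code_a, mask_a, code_b, mask_b, max_shift=8):
    """Minimum masked HD over circular shifts along the angular axis (axis 1)."""
    return min(
        masked_hamming(code_a, mask_a,
                       np.roll(code_b, shift, axis=1), np.roll(mask_b, shift, axis=1))
        for shift in range(-max_shift, max_shift + 1)
    )


def is_same_iris(distance, threshold=0.34):
    """Decide match vs non-match; the threshold value is an assumption."""
    return distance < threshold


rng = np.random.default_rng(1)
code = rng.integers(0, 2, size=(16, 256)).astype(bool)
mask = np.ones_like(code)
rotated = np.roll(code, 3, axis=1)                        # same code, rotated
stranger = rng.integers(0, 2, size=(16, 256)).astype(bool)

d_same = best_rotation_distance(code, mask, rotated, mask)
d_diff = best_rotation_distance(code, mask, stranger, mask)
```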

To validate the quality of the algorithms at scale, their performance was evaluated by collecting 2.5 million pairs of high-resolution infrared iris images from 303 different subjects. These subjects represent diversity across a range of characteristics, including eye color, skin tone, ethnicity, age, presence of makeup and eye disease or defects. Note that this data was not collected during field operations but stems from contributors to the Worldcoin project and from paid participants in a dedicated session organized by a respected partner. Using these images and their corresponding ground truth identities, the false match rate (FMR) and false non-match rate (FNMR) of the system were measured.

All 2.5 million image pairs were correctly classified as either a match or non-match. Additionally, the margin between the match and non-match distributions is wide, leaving comfortable room to accommodate potential outliers.

The match distribution presents two clear peaks, or maxima. The peak on the left (HD≈0.08) corresponds to the median Hamming distance for pairs of images taken from the same person during the same capture process. This means that they are extremely similar, as one would expect from two images of the same person. The peak on the right (HD≈0.2) represents the median Hamming distance for pairs of images taken from the same person but during different enrollment processes, often weeks apart. These are less similar, reflecting the naturally occurring variations in the same person's images taken at different times like pupil dilation, occlusion and eyelashes. Systems to narrow the matches distribution are continuously being iterated on: better auto-focus and AI-Hardware interactions, better real-time quality filters, Deep Learning feature generation, image noise reduction, etc.

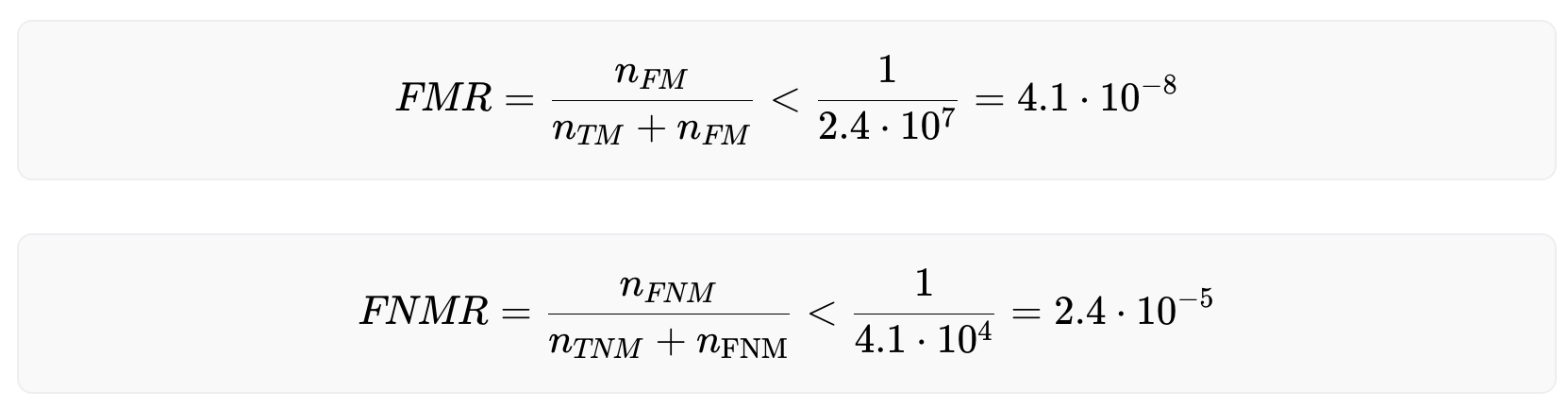

As there were no misclassified iris pairs, FMR and FNMR cannot be calculated exactly. However, an upper bound for both rates can be estimated:

With these numbers, uniqueness on a billion people scale can be verified with very high accuracy. However, the dataset used for this evaluation could be enlarged, and more effort is needed to build larger and even more diverse datasets to estimate the biometric performance more accurately.

Conclusions

This section presented the key components of Worldcoin’s uniqueness verification pipeline. It illustrated how a combination of deep learning models for image quality assessment and image understanding, in conjunction with traditional feature generation techniques, enables accurate verification of uniqueness on a global scale.

However, work in this area is ongoing. Currently, the team at TFH is researching an end-to-end Deep Learning model, which could yield faster and even more accurate uniqueness verification.

Iris Code Upgrades

While the accuracy of the uniqueness verification algorithm of the Orb is already very high (specifically, a false match rate of 1 in 40 trillion for a 1:1 match), an even higher accuracy would be beneficial on a billion people scale. To this end, the biometric algorithm is continuously being developed and will be upgraded over time.

There are three key types of upgrades that can increase accuracy further:

Image preprocessing upgrades. These upgrades, which are backwards compatible, modify everything except the final step in the process: iris code feature generation. Elements such as the segmentation network and image quality thresholds are typical areas of improvement. For an in-depth look at the preprocessing algorithms, please refer to the image processing section. These types of upgrades generally occur multiple times a year.

Iris code generation upgrades for future verifications. Also backwards compatible, these upgrades involve modifying the iris code feature generation algorithm without recomputing previous iris codes. Such an upgrade involves the introduction of v2 codes, which are not compatible with the older v1 codes. For each new verification, both a v1 and a v2 code would be computed and compared against their respective sets. If both comparisons result in no collision, the v2 code is added to the set of v2 codes. This way, the set of v1 codes doesn’t grow any more, yet none of the individuals who are part of the v1 set can get a second World ID.

In the event of an upgrade to the feature generation algorithm, the corresponding false match rate evolves as follows:

For an in-depth understanding of the error rates, please revisit the relevant information in the section biometric performance on a billion people scale. From the above equation we can deduce that the likelihood of the i-th legitimate user experiencing a false match continues to increase with the expansion of the v2 set. However, provided that $\text{FMR}_{v2} \ll \text{FMR}_{v1}$, the rate of this growth is significantly reduced. For any individual included in the v1 set, the false non-match rate remains unaffected. For new enrollments, the false non-match rate of the v2 algorithm applies.

In principle, several such iris code versions can be stacked. This type of upgrade is expected to happen about once a year.

Recomputing existing iris codes. These upgrades may or may not be backwards compatible, depending on whether the original image is still available. These are expected to occur less frequently than iris code generation upgrades and to become less frequent over time.

To understand when a recomputation might be required, let us define the number of codes in the set of v1 codes as $N_{v1}$, and similarly $N_{v2}$ for v2 codes. If the error rates of the v1 code are much worse than the ones of v2 codes and therefore have major influence on the false match rate even at $N_{v2} \gg N_{v1}$, the set of v1 codes should eventually be recomputed. For this to be possible, the iris images need to be available. This can happen in several ways:

Re-capture images. Individuals could return to an Orb. Depending on the distance to an Orb and individual preferences this may or may not be a realistic option.

Custodial image storage. Upon request, the issuer can securely store the images and automatically recompute the iris code if necessary. Currently, this is an option for individuals, but it is likely to be discontinued with the introduction of self custodial image storage.

Self custodial image storage. Expected to be introduced in late 2023, this option allows individuals to store their signed and end-to-end encrypted images on their device. For recomputing iris codes, individuals can upload their images temporarily to a dedicated, audited cloud environment that deletes images upon recomputation, or perform the computation locally on their phones. To ensure integrity, the local computation requires the upgrade to happen within a zero-knowledge proof, necessitating the use of Zero-Knowledge Machine Learning (ZKML) on the individual’s phone. The feasibility of this approach depends on the computational capabilities of the individual’s phone and ongoing ZKML research.

If local computation or temporary upload isn't viable or preferable, individuals can always revisit an Orb where the iris code is computed locally.
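The handling of v1 and v2 sets described for iris code generation upgrades can be sketched as follows. This is a toy illustration, not the production service: codes are 64-bit integers, collisions are detected with an unmasked fractional Hamming distance, and the threshold and all names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List

N_BITS = 64        # toy code length (real iris codes are far longer)
THRESHOLD = 0.34   # hypothetical match threshold


def fractional_hd(a: int, b: int) -> float:
    """Fraction of differing bits between two N_BITS-bit codes."""
    return bin(a ^ b).count("1") / N_BITS


@dataclass
class VersionedCodeSets:
    v1: List[int] = field(default_factory=list)  # frozen after the upgrade
    v2: List[int] = field(default_factory=list)  # grows with new enrollments

    def enroll(self, code_v1: int, code_v2: int) -> bool:
        """Compare each code against its own set; insert into v2 only if both are new."""
        collides_v1 = any(fractional_hd(code_v1, c) < THRESHOLD for c in self.v1)
        collides_v2 = any(fractional_hd(code_v2, c) < THRESHOLD for c in self.v2)
        if collides_v1 or collides_v2:
            return False
        self.v2.append(code_v2)
        return True


# Alice enrolled before the upgrade, so only her v1 code is stored.
alice_v1, alice_v2 = 0xFFFF0000FFFF0000, 0x00FF00FF00FF00FF
bob_v1, bob_v2 = 0x0F0F0F0F0F0F0F0F, 0x3333333333333333
sets = VersionedCodeSets(v1=[alice_v1])

alice_again = sets.enroll(alice_v1 ^ 1, alice_v2)  # one noisy bit in her v1 code
bob_first = sets.enroll(bob_v1, bob_v2)
bob_again = sets.enroll(bob_v1, bob_v2)
```

Alice is rejected through the v1 set even though no v2 code of hers exists, Bob is accepted once, and his second attempt is rejected through the v2 set, while the v1 set never grows.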

Biometric Uniqueness Service

While the iris code is computed locally on the Orb, the biometric uniqueness service, i.e. the determination of uniqueness based on the iris code, is performed on a server, since the iris code needs to be compared against all other iris codes of humans who have verified before. This process is getting increasingly computationally intensive over time. Today, the biometric uniqueness service is run by Tools for Humanity. However, this should not be the case forever and there are several ideas regarding the decentralization of this service.

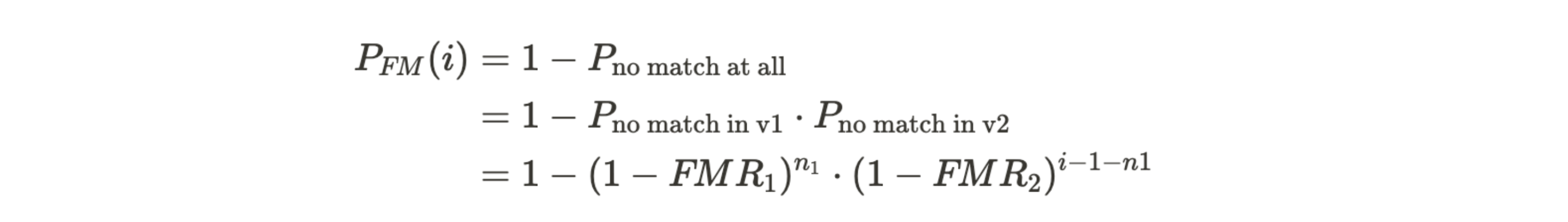

Worldcoin Protocol

Worldcoin is a blockchain-based protocol that consists of both off-chain and on-chain components (smart contracts) and is based on Semaphore from the Ethereum PSE group. The Protocol supports the Worldcoin mission by distinguishing humans from non-human actors online, privately but uniquely identifying individuals to solve certain classes of problems related to abuse, fraud, and spam.

Current Status

The Protocol was originally deployed on Polygon during its beta phase, and the current version runs on Ethereum with a highly scalable batching architecture. State bridges to Optimism and Polygon PoS are in place, with each batch insertion on Ethereum being replicated to those chains. As of this writing, over two million users have been successfully enrolled across these deployments, representing an average load of almost five enrollments per minute.

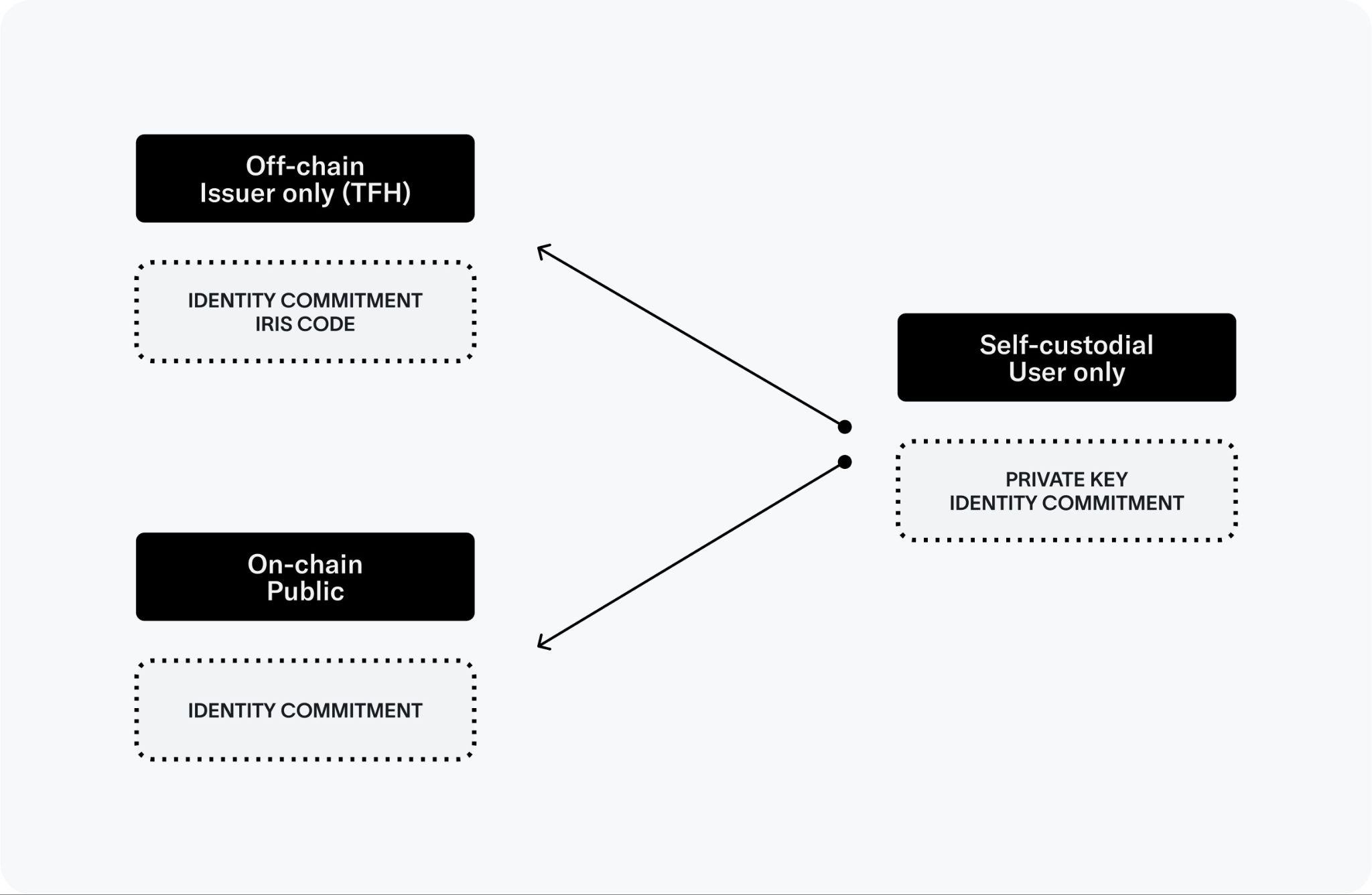

Technical Implementation

While the Orb adheres to data minimization principles such that no raw biometric data (e.g. iris images) needs to leave the device, it calculates and transmits iris codes that are stored and processed separately from the user’s profile data or the user’s wallet address. The first version of the Protocol originated as a solution to this fundamental privacy challenge specifically for the WLD airdrop. At its core, the Protocol combines the Orb-based uniqueness verification with anonymous set-membership proofs, thus allowing the issuer to determine whether the user has claimed their WLD tokens without collecting any further information about them. Because this solves a hard problem that others also face, World ID was created to allow third parties to use the Orb-verified “unique human set” in the same privacy-preserving way.

Users start enrollment by creating a Semaphore keypair on their smartphone, hereafter referred to as the World ID keypair. The Orb associates the public key with a user’s iris code, whose current sole purpose is to be used in the uniqueness check. If this check succeeds, the World ID public key gets inserted into an identity set maintained by a smart contract on the Ethereum blockchain. The updated state is subsequently bridged to Optimism and Polygon PoS so World ID can be used natively on those chains. Integration with other EVM-based chains is straightforward, and integration with non-EVM chains is possible as long as the bridged chain has a gas-efficient means of verifying Groth16 proofs. After enrollment the user can prove their inclusion in this identity set, and therefore their unique personhood, to third parties in a trustless and private way. Since the scheme is private, it’s usually necessary to tie this proof to a particular action (e.g. claiming WLD or voting on a proposal).

In the above scheme, the wallet creates a Groth16 proof showing that the user knows the private key to one of the public keys in the on-chain identity set, and the proof is tied to the action. An optional signal, like the preferred option in a vote, can also be included. By design, this provides strong anonymity, with an anonymity set the size of the whole identity set. It is not possible to learn the public key or anything relating to the enrollment, including the iris code, other than that it was successfully completed, so long as the private key does not leak. It is also not possible to learn that two proofs came from the same person if the scheme is used for different applications.

In the context of the Orb verification, the Orb is the only trusted component in the system; after enrollment, World ID can be used in a permissionless way.

Overall Architecture and User Flow

As mentioned, at the heart of the Protocol is the Semaphore anonymous set-membership protocol — an open-source project originally developed by a team from the Ethereum Foundation and extended by Worldcoin. Semaphore is unique in that it takes the basic cryptographic design for privacy as found in anonymous voting and currencies and offers it as a standalone library. Semaphore stands out in its simplicity: It uses a minimalistic implementation while providing maximum freedom for implementers to design their protocols on top. Semaphore’s straightforward design also allows it to make the adaptations required to support multiple chains and enroll a billion people efficiently. Worldcoin’s version of Semaphore is deployed as a smart contract on Ethereum, with a single set containing one public key (called an identity commitment) for each enrolled user. A commitment to this set is replicated to other chains using state bridges so that corresponding verifier contracts can be deployed there.

Users interact with the Protocol through an identity wallet containing a Semaphore key pair specific to World ID. Semaphore does not use an ordinary elliptic curve key pair, but leverages a digital signature scheme using a ZKP primitive. The private key is a series of random bytes, and the public key is a hash of those bytes. The signature is a ZKP that the private key hashes to the public key. Specifically, the hash function is Poseidon over the BN254 scalar field. The public key is not used outside of the initial enrollment (for interactions with smart contracts, the wallet also contains a standard Ethereum key pair). The user can initiate World ID verifications directly from the app, by scanning a QR code or tapping on a deep link. Upon confirmation, a ZKP is computed on the device and sent through the World ID SDK directly to the requesting party (e.g. a third-party decentralized application, or dApp).
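
The key pair described above can be sketched in a few lines. This is a minimal illustration only: the standard library has no Poseidon implementation, so SHA-256 reduced into the BN254 scalar field stands in for it, and the function names `stand_in_hash` and `generate_world_id_keypair` are hypothetical. It also follows the simplified description in this section (one secret, one hash) rather than any particular Semaphore release.

```python
import hashlib
import secrets

# Order of the BN254 scalar field, the field the text says Poseidon runs over.
BN254_SCALAR_FIELD = 21888242871839275222246405745257275088548364400416034343698204186575808495617


def stand_in_hash(data: bytes) -> int:
    """NOT Poseidon: SHA-256 reduced into the field, for illustration only."""
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % BN254_SCALAR_FIELD


def generate_world_id_keypair() -> tuple[bytes, int]:
    """Return (private_key, identity_commitment)."""
    private_key = secrets.token_bytes(32)             # "a series of random bytes"
    identity_commitment = stand_in_hash(private_key)  # "a hash of those bytes"
    return private_key, identity_commitment
```

Only the identity commitment would be shared at enrollment; the private key stays on the device and is later used as a private input when proofs are generated.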

Developers can integrate World ID on-chain using the central verifier contract. As part of any other business logic, the developer can call the verifier to validate a user-provided proof. The developer at a minimum provides an application ID and action (which are used to form the external nullifier). The external nullifier is used to determine the scope of the Sybil resistance, i.e. that a person is unique for each context. Within the zero-knowledge circuit that a user computes to generate a proof with their World ID, the external nullifier is hashed in conjunction with the user’s private key to generate a nullifier hash. The same person may register in multiple contexts but will always produce the same nullifier hash for a specific context. The developer may also provide an optional message (called a signal) which the user will commit to within the ZKP.

If the proof is valid, the developer knows that whoever initiated the transaction is a verified human being. The developer can then enforce uniqueness on the nullifier hash to guarantee Sybil resistance.

For example, to implement quadratic voting, one would use a unique identifier for the governance proposal as context and the user’s preferred choice as message. In case of airdrops (just as for WLD), the associated message would be the user’s Ethereum wallet address.
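
The relationship between application ID, action, external nullifier, and nullifier hash can be illustrated as follows. This is a sketch under stated assumptions: SHA-256 stands in for the hash used inside the circuit, the helper names and the `ActionRegistry` class are hypothetical, and proof verification is omitted entirely. In the real Protocol the nullifier hash is a public output of the ZKP and never requires revealing the private key.

```python
import hashlib


def stand_in_hash(*parts: bytes) -> bytes:
    """NOT the circuit hash: length-prefixed SHA-256, for illustration only."""
    h = hashlib.sha256()
    for part in parts:
        h.update(len(part).to_bytes(4, "big"))
        h.update(part)
    return h.digest()


def external_nullifier(app_id: str, action: str) -> bytes:
    """Scope of the Sybil resistance, formed from application ID and action."""
    return stand_in_hash(app_id.encode(), action.encode())


def nullifier_hash(private_key: bytes, ext_nullifier: bytes) -> bytes:
    """Same key and same context always give the same value."""
    return stand_in_hash(ext_nullifier, private_key)


class ActionRegistry:
    """Developer-side set of nullifier hashes already used for one context."""

    def __init__(self) -> None:
        self._seen: set[bytes] = set()

    def record(self, nullifier: bytes) -> bool:
        """Return True the first time a nullifier is seen, False afterwards."""
        if nullifier in self._seen:
            return False
        self._seen.add(nullifier)
        return True
```

Because the context is mixed into the hash, the same person produces unrelated values for a vote and for an airdrop, while a second attempt within one context reproduces the earlier value and is rejected.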

Alternatively, World ID can be used off-chain. On the wallet side, everything remains the same. The difference is that the proof-verification happens on a third-party server. The third-party server still needs to check whether the given set commitment (i.e. Merkle root) corresponds to the on-chain set. This is done using a JSON-RPC request to an Ethereum provider or by relying on an indexing service. All of that is abstracted away by the World ID SDK and additional tooling in order to provide a better developer experience.

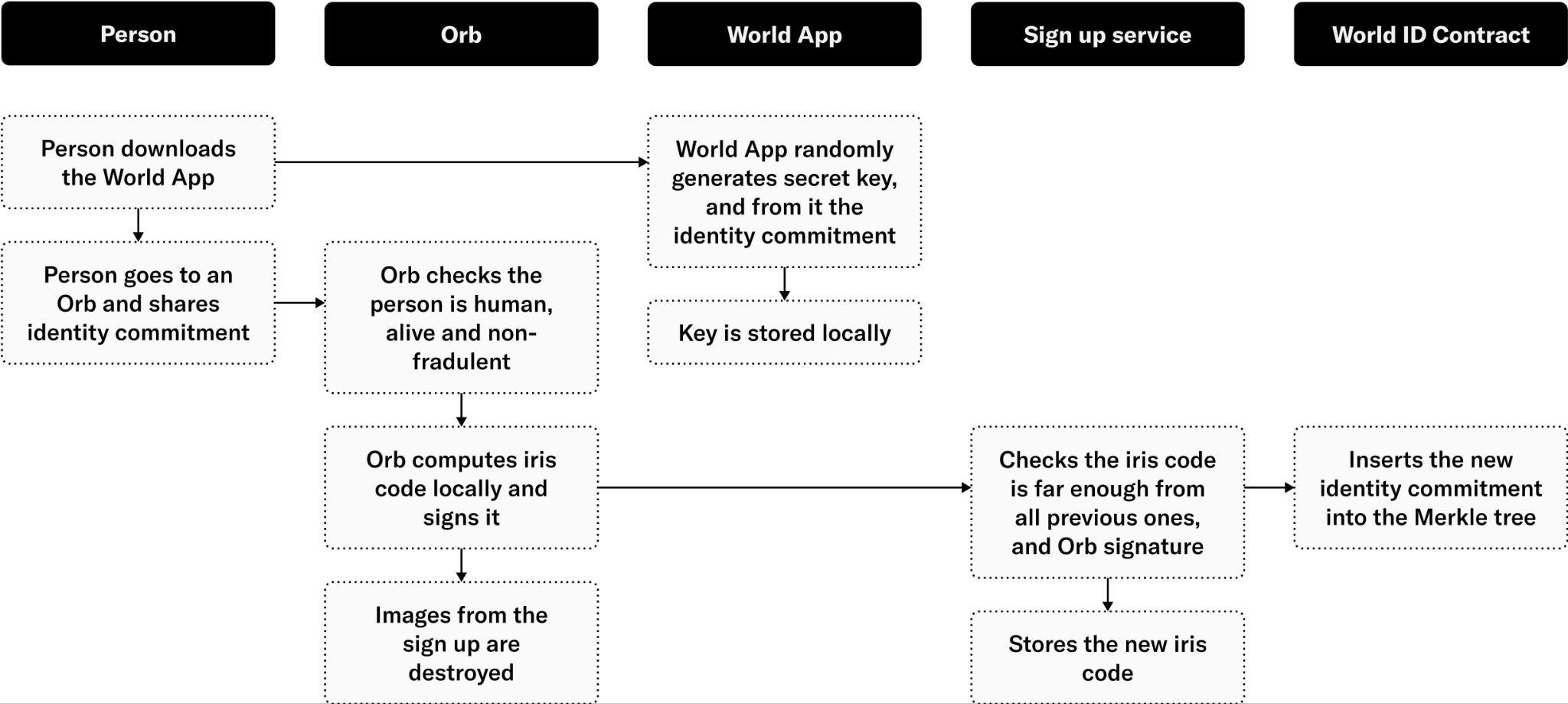

Enrollment Process

This section outlines how the enrollment process works for generating a World ID and verifying at an Orb.

The Semaphore protocol provides World ID with anonymity, but by itself it does not satisfy Worldcoin’s scaling requirements. A regular insertion takes about a million gas (a unit of transaction cost in Ethereum). Gas prices fluctuate heavily on Ethereum, but this transaction could easily cost over $100 in today’s fee market, making it prohibitively expensive to sign up billions of people.
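
To make the cost argument concrete, the arithmetic can be written out. The gas price and ETH price below are assumptions picked purely for illustration (they are not figures from this document); only the one-million-gas estimate comes from the text.

```python
WEI_PER_GWEI = 10**9
WEI_PER_ETH = 10**18


def tx_cost_usd(gas_used: int, gas_price_gwei: int, eth_price_usd: int) -> float:
    """Transaction cost in USD, computed in integer wei to avoid rounding drift."""
    cost_wei = gas_used * gas_price_gwei * WEI_PER_GWEI
    return cost_wei * eth_price_usd / WEI_PER_ETH


# Assumed market conditions, chosen only for illustration.
GAS_PRICE_GWEI = 50
ETH_PRICE_USD = 2_000

# About one million gas per unbatched insertion, as stated above.
single_insertion_usd = tx_cost_usd(1_000_000, GAS_PRICE_GWEI, ETH_PRICE_USD)

# Naively repeating that for a billion enrollments.
one_billion_users_usd = single_insertion_usd * 1_000_000_000
```

Under these assumed prices a single insertion costs roughly $100, so a billion unbatched insertions would cost on the order of $100 billion.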

One could use cheaper alternatives to Ethereum, but that comes at the cost of security and adoption; Ethereum has the largest app ecosystem and Worldcoin aims for World ID to be maximally useful. For that, it is best to start from Ethereum and build out from there. However, from a cost perspective, there are limits to scaling atop Ethereum, as the large insertion operation still happens on-chain. The most viable options are optimistic rollups, but these require considerable L1 calldata. Therefore, Worldcoin scaled Semaphore using a zk-rollup style approach that uses one-third the amount of L1 calldata of optimistic rollups. The enrollment proceeds as follows (see above diagram):

- The user downloads the World App, which, on first start, generates a World ID keypair. In World App, private keys are optionally backed up (details on this coming soon). An Ethereum keypair is generated as well.

- To verify their account, the user generates a QR code on the World App and presents it to the Orb. This air-gapped approach ensures the Orb isn’t exposed to any sort of device or network-related information associated with the user’s device.

- The Orb verifies that it sees a human, runs local fraud prevention checks, and takes pictures of both irises. The iris images are converted on the Orb hardware into the iris code. Raw biometric data does not leave the device (unless explicitly approved by the user for training purposes).

- A message containing the user’s identity commitment and iris code is signed with the Orb’s secure element and then sent to the signup service, which queues the message for the uniqueness-check service.

- The uniqueness-check service verifies the message is signed by a trusted Orb and makes sure the iris code is sufficiently distinct from all those seen before using the Hamming distance as distance metric.

- If the iris code is sufficiently distant (based on the Hamming distance calculation), the uniqueness service stores a copy of the iris code to verify uniqueness of future enrollments and then forwards the user’s identity commitment to the signup sequencer.

- The signup sequencer takes the user’s identity commitment and inserts it into a work queue for later processing by the batcher.

- A batcher monitors the work queue. When 1) a sufficiently large number of commitments are queued or 2) the oldest commitment has been queued for too long, the batcher will take a batch of keys from the queue to process.

- The batcher computes the effect of inserting all the keys in the batch to the identity set, the on-chain Semaphore Merkle tree. This results in a sequence of Merkle tree update proofs (essentially a before-and-after inclusion proof). The prover computes a Groth16 proof with initial root, final root, and insertion start index as public inputs. The private inputs are a hash of public keys and the insertion proofs.

- For optimization purposes, the above-mentioned “public inputs” are actually keccak-hashed into a single public input, while the unhashed values are supplied as private inputs, which reduces the on-chain verification cost significantly. The circuit verifies that the initial tree leaves are empty and correctly updated. Computing the proof for a batch size of 1,000 takes around 5 minutes on a single AWS EC2 hpc6a instance.

- The batcher creates a transaction containing the proof, public input, and all the inserted public keys and submits it to a transaction relayer. The relayer assigns appropriate fees, signs the transaction, and submits it to one or more blockchain nodes. It also commits the transaction to persistent storage so that mispriced or lost transactions will be re-priced and re-submitted.

- The transaction is processed by the World ID contract, which verifies it came from the sequencer. The initial root must match the current one, and the contract hashes the provided public keys. Public keys are available as transaction calldata.

- The Groth16-verifier contract checks the integrity of the ZKP. An operation takes only about 350k gas for a batch of 100.

- The old root is deprecated (but still valid for some grace period), and the new root is set to the contract.
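
The uniqueness check in the steps above can be sketched as a comparison of fractional Hamming distances. This is a toy model under stated assumptions: iris codes are treated as plain fixed-width bit strings held in Python integers, the class name `UniquenessService` and the threshold value are hypothetical, and practical concerns such as masking of occluded bits, rotation compensation, and efficient search are left out.

```python
def fractional_hamming(a: int, b: int, n_bits: int) -> float:
    """Fraction of bit positions in which two n_bits-wide codes differ."""
    return bin(a ^ b).count("1") / n_bits


class UniquenessService:
    """Stores accepted iris codes and rejects codes too close to a stored one."""

    def __init__(self, n_bits: int, threshold: float) -> None:
        self.n_bits = n_bits
        self.threshold = threshold  # assumed value; not specified in the text
        self.codes: list[int] = []

    def try_enroll(self, iris_code: int) -> bool:
        """Return True and store the code if it is sufficiently distinct."""
        for stored in self.codes:
            if fractional_hamming(stored, iris_code, self.n_bits) < self.threshold:
                return False  # too similar to an earlier enrollment
        self.codes.append(iris_code)
        return True
```

Only when `try_enroll` succeeds would the identity commitment be forwarded to the signup sequencer.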

The ZKP guarantees the integrity of the Merkle tree and data availability. What sets it apart from the ideal zk-rollup model is the lack of validator decentralization; the implementation uses a single fixed-batch submitter.
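
The statement proven for each batch (initial root, final root, insertion start index, empty leaves before the update) can be illustrated with a small Merkle tree. This sketch makes several assumptions: SHA-256 replaces the tree's real hash function, the tree is tiny and recomputed in full, and `insert_batch` is a hypothetical helper that performs in plain code the checks the circuit would enforce in zero knowledge.

```python
import hashlib

EMPTY_LEAF = b"\x00" * 32


def hash_pair(left: bytes, right: bytes) -> bytes:
    """Stand-in node hash (SHA-256), not the hash used on-chain."""
    return hashlib.sha256(left + right).digest()


def merkle_root(leaves: list[bytes]) -> bytes:
    """Root of a complete binary tree; the leaf count must be a power of two."""
    if not leaves or len(leaves) & (len(leaves) - 1):
        raise ValueError("leaf count must be a power of two")
    level = list(leaves)
    while len(level) > 1:
        level = [hash_pair(level[i], level[i + 1]) for i in range(0, len(level), 2)]
    return level[0]


def insert_batch(leaves: list[bytes], start_index: int, commitments: list[bytes]):
    """Return (initial_root, final_root, updated_leaves) for one batch."""
    end = start_index + len(commitments)
    if end > len(leaves):
        raise ValueError("batch does not fit in the tree")
    # Mirrors the circuit check that the target leaves are still empty.
    if any(leaf != EMPTY_LEAF for leaf in leaves[start_index:end]):
        raise ValueError("target leaves are not empty")
    initial_root = merkle_root(leaves)
    updated = leaves[:start_index] + list(commitments) + leaves[end:]
    return initial_root, merkle_root(updated), updated
```

Chaining two batches shows why the contract requires the initial root to match the current one: the final root of one batch is exactly the initial root of the next.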

After enrollment is complete, the user can use the World App autonomously. At that point, the system works in a decentralized, trustless and anonymous manner.

Details regarding trust assumptions and limitations for World ID can be found in the limitations section.

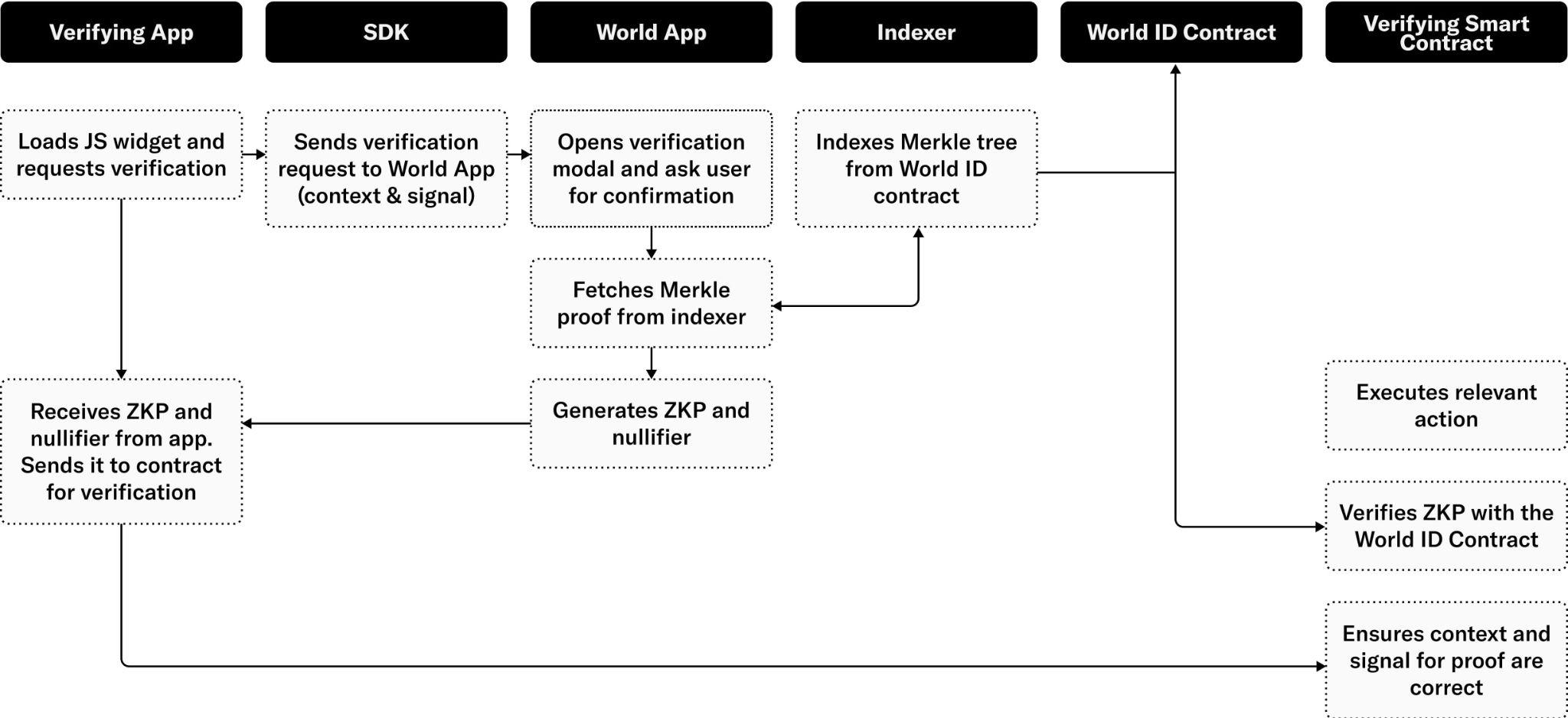

Verification Process

Developers can integrate World ID as part of a transaction (Web3) or request (Web2) through the World ID SDK:

- A verification process is triggered through one of the in-app options or through a QR code presented by a third-party application. Scanning the QR code opens the application. A verification request contains a context, message, and target. The context uniquely identifies the scope of the Sybil protection (e.g. the third-party application, a vote on a particular proposal, etc.). The message encodes application-specific business logic related to the transaction. The target identifies the receiving party of the claim (i.e. callback).

- The user inspects the verification details and decides to proceed using World ID. It is important that the user knows that the context is the intended one to avoid man-in-the-middle attacks.

- To generate a ZKP, the application needs a recent Merkle inclusion proof from the contract. It is possible to do this in a decentralized manner by fetching the tree from the contract, but at the scale of a billion users this requires downloading several gigabytes of data, which is prohibitive for mobile applications. To solve this, an indexing service was developed that retrieves a recent Merkle inclusion proof on behalf of the application. To use the service, the application provides its public key, and the indexer replies with an inclusion proof. Since this allows the indexer to associate the requester’s IP address with their public key, this constitutes a minor breach of privacy. One way to mitigate this is to use the service through an anonymization network¹. The indexing service is currently part of the signup sequencer infrastructure and is open source, so anyone can run their own instance in addition to the one provided.

- The application can now compute a ZKP using a current Merkle root, the context, and the message as public inputs; the nullifier hash as public output; and the private key and Merkle inclusion proof as private inputs². Note that no identifying information is part of the public inputs. The proof has three guarantees: 1) the private key belongs to the public key, hence proving ownership of the key, 2) the inclusion proof correctly shows that the public key is a member of the Merkle tree identified by the root, and 3) the nullifier is correctly computed from the context and the private key. The proof is then sent to the verifier.

- The verifier dApp will receive the proof and relay it to its own smart contract or backend for verification. When the verification happens from a backend in the case of Web2, the backend usually contacts a chain-relayer service as the proof inputs need to be verified with on-chain data.

- The verifier contract makes sure the context is the correct one for the action. Failing to do so leads to replay attacks where a proof can be reused in different contexts. The verifier will then contact the World ID contract to make sure the Merkle root and ZKP are correct. The root is valid if it is the current root or recently was the current root. It is important to allow for slightly stale roots so the tree can be updated without invalidating transactions currently in flight. In a pure append-only set, the roots could in principle remain valid indefinitely, but this is disallowed for two reasons: First, as the tree grows, the anonymity set grows as well. By forcing everyone to use similar recent roots, anonymity is maximized. Second, in the future one might implement key recovery, rotation, and revocation, which would invalidate the append-only assumption.

- At this point, the verifier is assured that a valid user is intending to do this particular action. What remains is to check the user has not done this action before. To do this, the nullifier from the proof is compared to the ones seen before³. This comparison happens on the developer side. If the nullifier is new, the check passes and the nullifier is added to the set of already seen ones.

- The verifier can now carry out the action using the message as input. They can do so with the confidence that the initiation was by a confirmed human being who has not previously performed an action within this context.
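
The verifier-side checks in the steps above (correct context, current or recently current root, valid proof, unseen nullifier) can be sketched as follows. The names `RootHistory` and `verify_action` are hypothetical, timestamps are plain integers in seconds, the grace period is an assumed parameter, and the ZKP check is passed in as a callable because actual Groth16 verification is out of scope for this illustration.

```python
from typing import Callable, Optional


class RootHistory:
    """Tracks the current root and keeps superseded roots valid for a while."""

    def __init__(self, grace_period_s: int) -> None:
        self.grace_period_s = grace_period_s
        self.current: Optional[bytes] = None
        self._superseded_at: dict[bytes, int] = {}

    def set_root(self, new_root: bytes, now: int) -> None:
        if self.current is not None:
            self._superseded_at[self.current] = now  # start the grace period
        self.current = new_root

    def is_valid(self, root: bytes, now: int) -> bool:
        if self.current is not None and root == self.current:
            return True
        superseded = self._superseded_at.get(root)
        return superseded is not None and now - superseded <= self.grace_period_s


def verify_action(context: str, expected_context: str, root: bytes,
                  nullifier: bytes, roots: RootHistory, seen: set,
                  zkp_ok: Callable[[], bool], now: int) -> bool:
    """Run the checks in order; record the nullifier only if all pass."""
    if context != expected_context:    # otherwise proofs could be replayed
        return False
    if not roots.is_valid(root, now):  # stale or unknown root
        return False
    if not zkp_ok():                   # placeholder for proof verification
        return False
    if nullifier in seen:              # action already performed
        return False
    seen.add(nullifier)
    return True
```

Accepting slightly stale roots keeps proofs that are already in flight valid across a tree update, while the bounded grace period keeps users on similar, recent roots.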

As the above process shows, verification involves considerable complexity. Some of this complexity is handled by the wallet and Worldcoin-provided services and contracts, but a large portion will be handled by verifiers developed by third parties. To make integrating World ID as straightforward and safe as possible, an easy-to-use SDK containing example projects and reusable GUI components was developed, in addition to lower-level libraries.

Conceptually, the hardest part for new developers is the nullifiers. This is a standard solution to create anonymity, but it is little known outside of cryptography. Nullifiers provide proof that a user has not done an action before. To accomplish this, the application keeps track of nullifiers seen before and rejects duplicates. Duplicates indicate a user attempted to do the same action twice. Nullifiers are implemented as a cryptographic pseudo-random function (i.e. hash) of the private key and the context. Nullifiers can be thought of as context-randomized identities, where each user gets a fresh new identity for each context. Since actions can only be done once, no correlations exist between these identities, preserving anonymity. One could imagine designs where duplicates aren’t rejected but handled in another way, for example limiting to three tries, or once per epoch. But because such designs correlate a user’s actions, they are not recommended. The same result can instead be accomplished using distinct contexts (e.g. providing three contexts, or one for each epoch).
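
The recommendation to use distinct contexts instead of counting duplicates can be sketched as below. Everything here is illustrative: SHA-256 stands in for the pseudo-random function, the context naming scheme and the `LimitedAttempts` class are hypothetical, and the nullifier is assumed to have already been checked by a valid proof for the stated context.

```python
import hashlib


def stand_in_nullifier(private_key: bytes, context: str) -> bytes:
    """NOT the real construction: SHA-256 standing in for the circuit's hash."""
    ctx = context.encode()
    return hashlib.sha256(len(ctx).to_bytes(4, "big") + ctx + private_key).digest()


def attempt_contexts(base_context: str, max_attempts: int) -> list[str]:
    """One distinct context per permitted attempt."""
    return [f"{base_context}/attempt-{i}" for i in range(max_attempts)]


class LimitedAttempts:
    """Allows each person one action per context, across a fixed set of contexts."""

    def __init__(self, base_context: str, max_attempts: int) -> None:
        self.allowed = set(attempt_contexts(base_context, max_attempts))
        self.seen: set[bytes] = set()

    def submit(self, context: str, nullifier: bytes) -> bool:
        if context not in self.allowed or nullifier in self.seen:
            return False
        self.seen.add(nullifier)
        return True
```

Each attempt yields an unrelated nullifier, so the verifier can cap the number of tries without being able to tell that the three attempts came from the same person.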

For example, suppose the goal is that all humans should be able to claim a token each month. To do this, a verifier contract is deployed that can also send tokens. As context, a combination of the verifier-contract address and the current time rounded to months is used. This way each user can create a new claim each month. As the message, the address where the user wants to receive the token claim is used. To make this scalable, it is deployed on an Ethereum L2 and uses the World ID state bridge.
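
A context that combines the verifier-contract address with the current month could be derived along these lines. This is an off-chain illustration only: the string format and the function name `monthly_context` are assumptions, and a real verifier contract would derive the month from the block timestamp rather than from a Python `datetime`.

```python
from datetime import datetime, timezone


def monthly_context(verifier_address: str, when: datetime) -> str:
    """Context string that changes once per calendar month (UTC)."""
    if when.tzinfo is None:
        raise ValueError("expected a timezone-aware datetime")
    utc = when.astimezone(timezone.utc)
    return f"{verifier_address.lower()}:{utc.year:04d}-{utc.month:02d}"
```

Two claims made in the same month share a context, so the second produces a duplicate nullifier and is rejected, while the first claim in the following month falls under a new context and succeeds.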

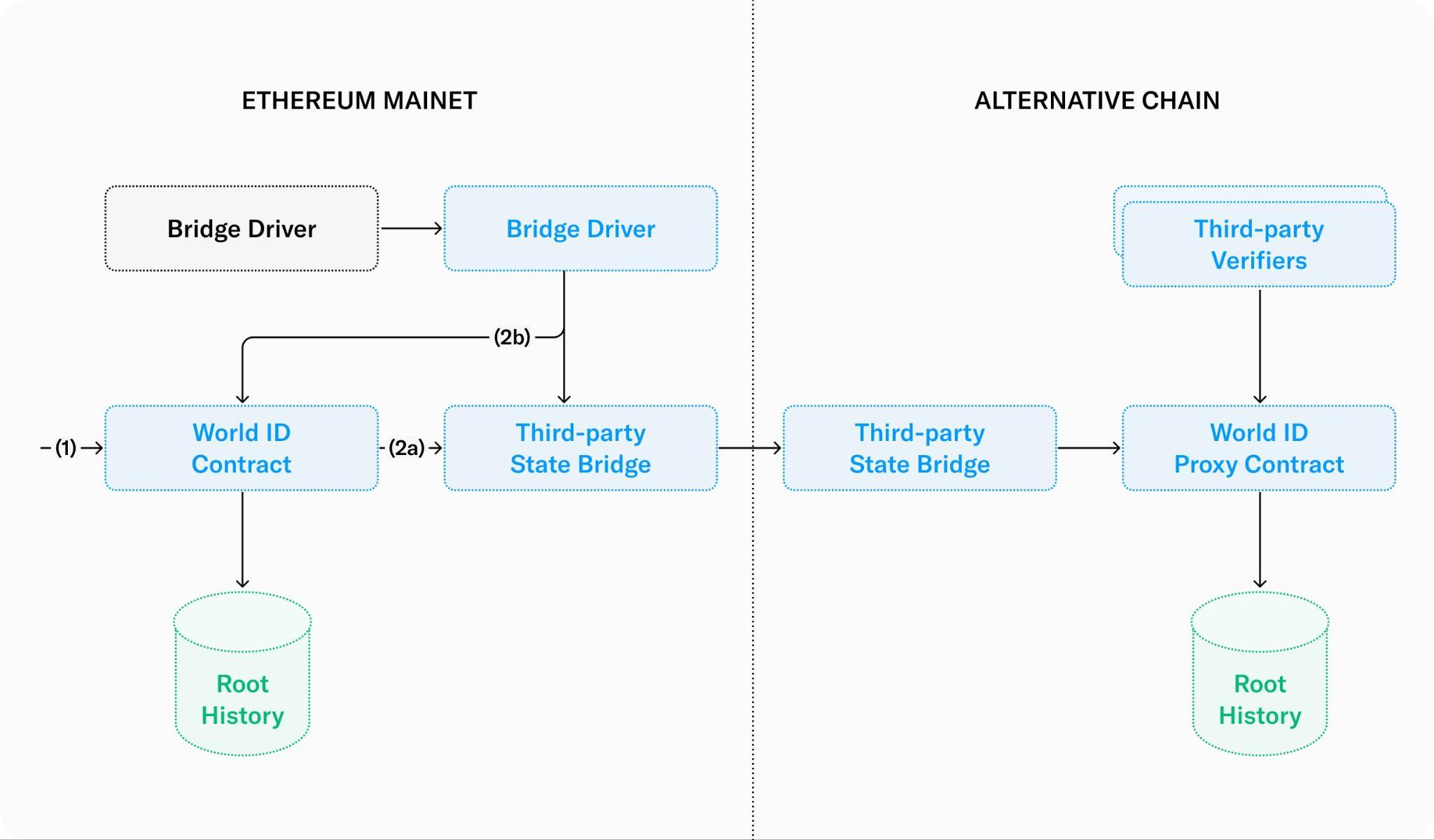

Multi-chain Support

While it’s important that World ID has its security firmly grounded, it is intended to be usable in many places. To make World ID multi-chain, the separation between enrollment and verification is leveraged. Enrollment will happen on Ethereum (thus guaranteeing security of the system), but verification can happen anywhere. Verification is a read-only process from the perspective of the World ID contract, so a basic state-replication mechanism will work.

- Enrollment happens as before on Ethereum, but now each time the root history is updated a replication process is triggered.

- The replication is initiated by the World ID contract itself (route 2a) or by an external service that triggers a contract to read the latest roots from the contract (route 2b). Either way, the latest roots are pushed as messages to a third-party state bridge for the target chain.